TorstenFell

With travel restrictions and social distancing in play, virtual training is the most sought-after solution today. In this article, I share 6 immersive self-paced learning strategies to maximize the efficiency of your virtual training programs.

Enhancing Virtual Training Programs With Immersive Self-Paced Learning

While delivering virtual training has been a significant part of corporate training, the COVID-19 crisis has clearly accelerated its need across the world. With travel restrictions and social distancing, L&D teams are looking at approaches to convert the classroom/ILT sessions to virtual trainings.

What Are The Options To Consider As You Convert Classroom/ILT Sessions To Virtual Trainings?

This virtual training transformation can be handled in 3 formats:

- Instructor-Led Training (ILT) to Virtual Instructor-Led Training (VILT) migration

Leveraging the platform to create the required impact and learning gain in the new mode.

- Blended training

Building up the VILT sessions with components of self-paced, online training or mobile learning to arrive at the right blend.

- Fully online (self-paced training)

Offering highly immersive learning approaches such as microlearning, mobile learning, gamification, and much more.

Remember

In your virtual training transformation from the classroom/ILT mode, you cannot map the classroom/ILT session “as is” to create an identical or better impact and improve virtual training efficiency.

Instead, you need to:

- Use effective digital pedagogy to manage your virtual training transformation.

- Identify which mode will work best (VILT/blended or self-paced, mobile-first/mobile-ready online courses).

- Leverage other strategies that create high motivation, participation, and engagement for remote learners—these could include simulations, gamification, 3D, Virtual Reality (VR), Augmented Reality (AR), and so on.

- Determine the training and learning effectiveness through online assessment strategies.

- Recommend delivery modes and platforms.

The transition to virtual training is a journey where the elements of training may shift gradually from primarily ILT to VILT or blended, and/or ultimately to a fully self-paced training mode.

In this article, I focus on maximizing the virtual training efficiency through 6 immersive self-paced learning strategies.

What Are The Key Benefits Of Self-Paced Virtual Training?

- Flexibility

You can learn when/where you want (especially from home!).

- Scalability

It supports the learning needs of small as well as expanding, large remote workforces.

- Adaptability

It is available on multiple learning devices, platforms, and operating environments.

- Customization

It is easily configurable for different roles/responsibilities.

- Personalization

It delivers unique training to each learner as opposed to a one-size-fits-all approach.

- Multi-generational workforce support

Boomers, Gen-Xers/Yers, and Millennials can all create unique learning paths to support their individual learning styles.

What Immersive Virtual Learning Strategies Can You Use As You Opt For A Fully Self-Paced Online Mode?

To create effective and immersive virtual learning experiences, consider leveraging a learning and performance ecosystem-based approach for your workforce [1]. This mode works on the principle of continuous learning—rather than discrete learning—and provides value-adds to learners over distance.

Here are 6 immersive virtual learning strategies you can use as you opt for a fully self-paced online mode:

- Capture learners’ attention regarding training opportunities. Leverage newsletters and teaser videos to highlight the significance of the initiative.

- Build awareness around “What’s In It For Me” (WIIFM). Highlight what value this training provides to the learners.

- For formal training, opt for immersive learning strategies like:

  - Gamification

  - AR/VR

  - Scenario-based learning

  - Interactive story-based learning

  - Branching scenarios

  - Complex decision-making simulations

- Augment formal training with performance support tools (PSTs) or job aids for knowledge application or to assist the learners at the moment of need.

- Post training:

  - Reinforce learning to minimize knowledge erosion (addressing the forgetting curve)

  - Continually challenge workers with more complex and advanced learning content

  - Provide practice zones where learners can hone their skills

  - Reconnect. Provide additional cues through related curated assets to keep the learning journey going.

- Offer social or collaborative learning opportunities so learners can learn through peer networking and other group forums—both within and outside of the work environment.

The unique capabilities of an immersive virtual learning experience help seamlessly connect learners with a broad array of learning content, best practice processes, and supporting tools. They provide a richer and more holistic approach to delivering virtual training, which helps enhance workforce performance.

I hope this article provides the required insights to maximize your virtual training efficiency with self-paced learning strategies. Download the eBook Virtual Training Guide: How To Future-Proof Your Virtual Training Transformation and discover all you need to know for your virtual training transformation endeavor—packed with tips, best practices, and ideas you can use! Join the webinar, too, and learn what is the ideal long-term approach for remote learners.

References:

[1] 5 Questions Answered That Prove You Should Invest In Learning And Performance Ecosystems

Source:

Photo: katleho Seisa/gettyimages

https://elearningindustry.com/immersive-self-paced-learning-strategies-to-maximize-virtual-training-program-efficiency

According to Nexum, the app for iOS and Android takes both an exploratory and a narrative didactic approach and consists of three chapters: an immersive VR story, an interactive operating room, and a 360° video tour. In the app, children are accompanied by “Kimi”, the “Kinderinsel” penguin, who appears at various points and explains everything about the upcoming operation.

Using Virtual Reality to Counter Children’s Fear of Hospitals

The bilingual “Kinderinsel” app, which is used with a Cardboard headset, is aimed primarily at children between five and ten years old, but it also involves parents in preparing for the operation. A central feature of the app is therefore the mirroring function, also developed and implemented by Nexum, which lets parents follow on a second smartphone what their child sees, does, and experiences in virtual reality.

By its own account, Nexum designed and built the VR app in several stages. The development steps included, among others, working out the didactic approach, developing the story, writing the texts, creating and designing a variety of 3D-animated characters, and programming and technical implementation using the VR development platform Unreal. Ahead of the concept phase, the agency held a focus group workshop with the target group to gather insights into expectations and wishes for the VR app and to get a picture of possible fears associated with upcoming operations.

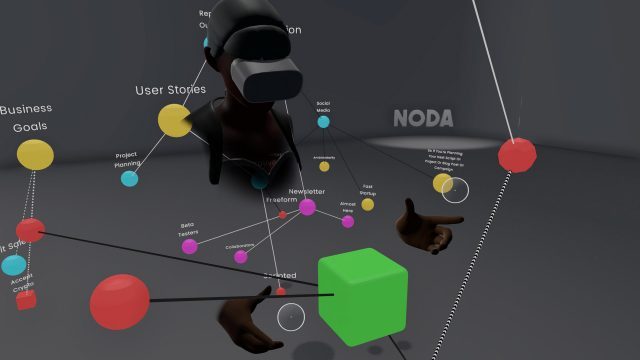

Noda is a ‘mind-mapping’ app that uses VR’s unique affordances for spatial brainstorming and information organization. Launched initially in Early Access in 2017, the app will reach its 1.0 release later this year, adding multi-user functionality and switching to a freemium model with core functionality free for everyone. A Quest version of the app is planned for release in 2021.

Noda is a free-form mind-mapping app for VR. Conceptually, mind-mapping is similar to writing an outline to organize your thoughts before writing a paper, but the mind-mapping method typically makes use of spatial relationships to organize ideas rather than a more abstract hierarchy like with a written outline. Noda uses VR to enable mind-mapping in three dimensions and also strives to help you focus on the task at hand by leveraging VR’s ability to take you away from your usual (and sometimes distracting) surroundings.

The app has seen intermittent updates since its Early Access launch on Steam and Oculus PC in 2017, which have added a handful of features not available at launch like speech-to-text input, background images, and ambient music. The app’s interface has matured too, making things cleaner and adding new icons and colors for nodes. Most recently, Noda was updated with video tutorials to make learning the app easier.

Multi-User Feature & Freemium Structure Coming in Q3

Developer Coding Leap says that a major update for Noda is planned for Q3 of this year, which will add multi-user support for collaborative brainstorming sessions with other users. Additionally, the app will change to a freemium monetization model where the base app is free for everyone, while premium features can be unlocked for a one-time price of $20 (which is the current price of the app).

Fortunately, Coding Leap has confirmed that multi-user support will be available to all users whether they use the free or premium version. All users will be able to spend unlimited time in the app, but premium users will be able to save an unlimited number of mind-maps and also access features like speech-to-text input and specialized music.

Those who have already purchased the Early Access version of Noda will own the full premium version when it launches later this year, Coding Leap says.

Oculus Quest Support Planned for 2021

Coding Leap also plans to bring Noda to Oculus Quest next year. So far the studio hasn’t confirmed whether the app will launch on the Quest store proper, or if it will use the official Quest sideloading process which earlier this year Oculus confirmed would be coming to the headset.

More unannounced features are in the works for the Quest launch next year, which we expect will also find their way to the PC version. We’ve reached out to Coding Leap to confirm if the Quest version of Noda will support hand-tracking as well as multi-user and (if so) if it will be cross-platform with the PC version.

Source:

‘Noda’ Mind-mapping App to Get Freemium Multi-user Update in Q3, Quest Version Next Year

Nreal’s smartphone-powered consumer AR headset finally arrives this month in Korea.

Available now for pre-order, the Nreal Light AR smart glasses connect with compatible Android smartphones to bring your favorite apps, such as Instagram, Twitter, YouTube, and Google Chrome, to life in augmented reality. Just as important, the headset features a slim, lightweight frame designed to emulate the look of standard sunglasses, allowing you the opportunity to live that future life without constantly turning heads.

Seeing as the Nreal Light needs an Android device in order to function properly, Korean technology companies Samsung and LG are partnering with Nreal to offer consumers a discounted price on the soon-to-be-released AR headset with the purchase of a Samsung Galaxy Note 20 or LG Velvet smartphone on South Korea’s LG U+ carrier.

The Nreal Light normally retails for 699,000 KRW ($586); when bundled with either device and a 5G plan, however, the price drops to 349,000 KRW ($295).
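As a quick check on the bundle figures above, the following Python sketch works out the size of the discount and the exchange rate implied by each quoted KRW/USD pair. The variable and function names are illustrative; only the four prices come from the article.

```python
# Prices quoted in the article (KRW and the USD equivalents given alongside).
RETAIL_KRW = 699_000
BUNDLE_KRW = 349_000
RETAIL_USD = 586
BUNDLE_USD = 295


def discount_summary(retail: int, bundle: int) -> tuple[int, float]:
    """Return the absolute saving and the saving as a percentage of retail."""
    saving = retail - bundle
    return saving, 100 * saving / retail


saving_krw, saving_pct = discount_summary(RETAIL_KRW, BUNDLE_KRW)
print(f"Saving: {saving_krw:,} KRW ({saving_pct:.1f}% off)")

# Exchange rate implied by each quoted pair (KRW per USD).
print(f"Implied rate at retail: {RETAIL_KRW / RETAIL_USD:.0f} KRW/USD")
print(f"Implied rate in bundle: {BUNDLE_KRW / BUNDLE_USD:.0f} KRW/USD")
```

The bundle works out to a saving of 350,000 KRW, or roughly half the retail price. The two implied exchange rates differ slightly, which suggests the dollar figures were rounded or converted separately.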

According to Nreal, users will have access to hundreds of apps upon the headset’s launch on August 21st. This includes well-known apps such as Facebook and WeChat, as well as exclusive LGU+ apps designed specifically for use with the Nreal Light. This past March the company even introduced hand-tracking support to its developer kit, allowing creators to interact with their AR experiences with their own two hands.

Owners will receive several useful accessories as part of their purchase, including four magnetic nose pads, a corrective lens frame, and a VR Cover which blocks out all light while wearing the device, essentially turning it into a VR headset.

As previously stated, the Nreal Light features a slim, subtle design similar to that of conventional sunglasses. Despite its small size, however, the headset still manages to deliver an impressive augmented reality experience, thanks in large part to Nebula, a cutting-edge system capable of converting 2D Android apps into 3D AR experiences. With a 52-degree field-of-view, users can reportedly access “dozens of screens” in real-time, allowing them to watch videos, check messages, and play games simultaneously.

Unfortunately, this deal is exclusive to LGU+ customers in South Korea, at least for the moment. Of course, you can always pick up the headset at full price over at nreal.ai.

Source:

Nreal Light AR Smart Glasses Will Be Bundled With Latest Samsung And LG Smartphones

Image Credit: Nreal

Khronos Group, the consortium behind the OpenXR industry standard, today announced that it has begun officially certifying products that correctly implement the OpenXR standard. Additionally, the group has added new extensions to the standard to support hand-tracking and eye-tracking.

OpenXR is a royalty-free standard that aims to standardize the development of VR and AR applications, making for a more interoperable ecosystem. The standard has been in development since April 2017 and is supported by virtually every major hardware, platform, and engine company in the VR industry, including key AR players.

The Khronos Group has announced the OpenXR Adopters Program, allowing any company building an OpenXR product to apply for the official stamp of approval. Once approved, products can use the OpenXR logo on their implementation and also gain patent protection under the Khronos IP Framework.

To ensure that companies are correctly implementing the standard, Khronos Group has published the OpenXR Conformance Test Suite, a collection of tests which companies can use to verify that their OpenXR product is correctly implementing the standard.

The announcements mean that OpenXR is finally ready to be rolled out widely across the XR industry.

“The time to embrace OpenXR is now,” said Don Box, Technical Fellow at Microsoft. “In the year since the industry came together to publish the OpenXR 1.0 spec and demonstrated working bits at SIGGRAPH 2019, so much progress has happened. Seeing the core platforms in our industry getting behind the standard and shipping real, conformant implementations […] is singularly awesome.”

Khronos says that Facebook has shipped an OpenXR implementation for both Quest and Rift, and Microsoft has shipped an implementation for WMR headsets and HoloLens 2. Valve has also published a preview implementation of OpenXR which developers can begin building with.

“OpenXR is designed to enable VR content compatibility on as many devices as possible, giving developers the confidence of knowing they can focus on one build of their VR title and it will ‘just work’ across the entire PC VR ecosystem,” said Joe Ludwig of Valve. “This release is a huge step forward toward that goal, bringing support from two different implementations in the PC ecosystem. With these and more on the way, including our ongoing developer preview in SteamVR, now is the time for developers and engine vendors to start looking at OpenXR as the foundation for their upcoming content.”

Khronos also announced new extensions to OpenXR which expand the standard to support hand-tracking and eye-tracking.

Hand-tracking company Ultraleap has published an OpenXR preview implementation for its Leap Motion hand-tracking peripheral, and high-end enterprise headset maker Varjo has published an OpenXR preview implementation for its eye-tracking headsets.
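An application that wants to use these optional features would typically check which extensions the runtime reports before enabling them. The sketch below shows that check in plain Python. `XR_EXT_hand_tracking` and `XR_EXT_eye_gaze_interaction` are the names under which hand tracking and eye-gaze input appear in the OpenXR extension registry. The `reported` list is hypothetical sample data, standing in for what a real application would obtain from the OpenXR call `xrEnumerateInstanceExtensionProperties`, and `split_extensions` is an illustrative helper, not part of any OpenXR binding.

```python
# Extension names as listed in the OpenXR registry.
HAND_TRACKING = "XR_EXT_hand_tracking"
EYE_GAZE = "XR_EXT_eye_gaze_interaction"


def split_extensions(wanted, reported):
    """Split wanted extensions into (available, missing), keeping the order of `wanted`."""
    reported_set = set(reported)
    available = [name for name in wanted if name in reported_set]
    missing = [name for name in wanted if name not in reported_set]
    return available, missing


# Hypothetical runtime that supports hand tracking but not eye tracking.
reported = ["XR_KHR_opengl_enable", "XR_KHR_vulkan_enable", HAND_TRACKING]

available, missing = split_extensions([HAND_TRACKING, EYE_GAZE], reported)
print("enable:", available)
print("fall back without:", missing)
```

Treating both features as optional, rather than failing when one is missing, lets the same build run on headsets with different capabilities, which is in keeping with the portability goal described in this article.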

The end goal of OpenXR is to standardize the way that VR apps and headsets talk to each other. Doing so simplifies development by allowing tool and app developers to develop against a single specification instead of different specifications from various headset vendors. In many cases, OpenXR compatibility means that the exact same app can be run across several compatible headsets with no modification or even repackaging.

Source:

OpenXR Now Certifying Headset & App Compliance, Adds Extensions for Hand-tracking & Eye-tracking

The Oculus Quest is one of the hottest VR headsets available on the market right now. Out of the box, the Quest comes with everything you need to get started.

But after a bit of time with the headset, you might be wondering what other add-ons or accessories you can buy to improve your experience and make things a bit smoother. We’ve gathered together some of the best Quest add-ons and accessories right here, ranging from cords to cases and beyond.

[When you purchase items through links on our site, we may earn an affiliate commission from those sales.]

Travel/Carry Case

One of the most notably absent items from the Oculus Quest box is any form of portable storage for the headset. Given that you can damage the headset or scratch the lenses by leaving them exposed, getting a case to hold your Quest in is an absolute must.

You have some options, but we’ve found that the official case sold by Facebook can have some problems with the zipper after very little use. Instead, we’d recommend this case from JSVER and this case from Zaracle. Our staff tried both these cases and were happier with the quality and the available room compared to the official case.

Microfiber Cleaning Cloth

It is a real shame that a cleaning cloth isn’t included with the Oculus Quest, since some other headsets ship with one. Cleaning the lenses (here’s a guide for best cleaning practices) can have a dramatic effect on your VR experience. Oculus recommends wiping the lenses with a dry optical lens microfiber cloth, starting from the center of the lens and moving gently outward in a circular motion.

Facebook says alcohol-based wipes and cleaners should not be used, because alcohol can damage the lenses. For a stubborn smudge, you can dab a small amount of water on the cloth, according to Facebook. We haven’t tested these particular lens cloths, but there are many available and this 6-pack from Amazon looks like a good start.

VR Power Battery Pack/Counterweight

The VR Power acts as a counterweight and battery pack for the Quest. Strapping onto the back of the headset, it improves comfort by balancing the weight distribution, while also charging the headset while in use. This lets you stay in VR for much longer than you would with the standard Quest battery, and we were considerably impressed in our full review. It may be the best option if you’re looking for a Quest battery pack.

The VR Power is available from Rebuff Reality for $60, but will often be back-ordered due to high demand.

VR Ready PC

This might be the most unconventional accessory on the list, but a VR-ready PC is truly one of the best (and most expensive) Oculus Quest accessories you can buy. A VR-ready PC will allow you to play PC VR content on your Oculus Quest when connected via a USB cord. You can also use Virtual Desktop and other wireless solutions, but that might not be as comfortable or reliable as the wired experience.

Most VR-ready PCs composed of high-end or common components used for gaming rigs should work with Oculus Link, but you can double check by comparing your proposed parts or your existing rig against this Link compatibility list from Oculus.

Oculus Link-Compatible Cable

One of the best features of the Oculus Quest is the ability to play PC VR content on your Quest through Oculus Link, which works by connecting your Quest to a VR-ready PC with a USB cord. While you remain tethered to your computer, you can enjoy high fidelity PC VR content on the Quest, essentially allowing you to have the best of both VR worlds.

You can use a variety of different cables with Oculus Link. Facebook sells its own high-quality, thin and flexible 5 meter optical USB 3.0 C to C Oculus Link cord, which is available for $79 on the Oculus Store.

Alternatively, any USB 2.0 cord or higher will work (Oculus do note that ‘your performance can be improved when switching to a USB 3.0 connection’, but don’t give any more specifics beyond that). We’ve written about this $20 PartyLink cable that works well, and Facebook also officially recommends this $13 Anker cable as an alternative to their first-party option.

However, realistically any 2.0 or higher cable from a reliable brand should work fine. You can even use the included USB C cable that comes with the Oculus Quest, if you want. If you do use this cable though (or any cable that is USB C on both ends), you may also need to buy a USB C to A adapter to plug it into your computer if it doesn’t have a native USB C port onboard. The adapter will need to be USB 2.0 specification or higher as well.

Rechargeable Batteries

Multi-Device Wall Charger

If used in combination with the USB rechargeable batteries above, you would be able to use one outlet to recharge your Quest and controllers at the same time.

Chromecast

The Chromecast is a device that allows you to send and play media on your TV from other devices, such as your mobile phone. In this case, a Chromecast will allow you to cast the view from your Oculus Quest onto your TV, so others can watch what’s happening in VR on the TV.

This is a must-have accessory for demoing your Quest, as it allows others to watch what you do in VR and also allows you to watch and instruct others who might be new to the system, as they try it out. You can use your phone or tablet for this as well, of course, but in our experience a TV provides a better viewing experience for multiple people than crowding around a mobile device.

There are two kinds of Chromecasts, the Chromecast and the Chromecast Ultra. The only difference between the two is that the latter allows you to play 4K content. The Quest is not a 4K device, so you probably won’t see any difference when casting the Quest, but if you have a 4K TV then you’re probably better off getting the Ultra so that you can get the most out of other Chromecast media as well.

The Chromecast is typically available for around $35, and the Ultra for $70. For more information on how to use your Quest with your Chromecast, see here.

VR Cover Accessories

VR Cover is a company that’s been around for some time and is known for making accessories designed to make headsets feel more comfortable against the face as well as improve hygiene. We tested their Quest-specific covers and some people on staff love the added comfort and cleanliness, but some others don’t think it makes the Quest that much more comfortable. That being said, it is good to have these on hand if you plan on exercising and getting sweaty with the Quest – you’ll be able to quickly swap out the covers when things start to get a bit slippery.

They also have newer, cheaper silicone covers now that slide right over the original face pad easily.

Lens Cover

The lenses are one of the most vulnerable parts of the Quest headset — they can be permanently scratched and direct sunlight can be magnified through them, resulting in burnt screen pixels. If you’re looking for something to fit securely into the headset, protecting the lenses, then maybe try this lens protector from Orzero. UploadVR staff reported it works well for the Quest (and even other headsets) and protects the lenses from dust. You may have to turn the headset off fully when not in use, or change its settings, if the lens protector activates the headset’s proximity sensor.

The Orzero VR Lens Protect Cover is available for $10.99.

AMVR VR Stand And Headset Display

This stand is a great option if you want to both display and store your Quest somewhere central in your house, potentially even next to other gaming consoles or your TV. Some of our staff members tried out the stand and were suitably impressed. It can hold your Quest in the center, with the Touch controllers hanging to the side ready to be grabbed. It works for other headsets as well.

The stand is available on Amazon for $25.99.

Official Headphones or Kiwi Earbuds

All Oculus Quests include an audio system that releases sound from the head strap area. This system works decently because you don’t have to position anything inside or over your ears to hear immersive sound. Still, a lot of detailed sounds are lost with this system. Did you know, for instance, there are ambient sounds in the home area of Oculus Quest? You might not hear that with the out-of-the-box audio experience on Quest. For $49, Oculus sells official wired headphones that come in two completely separate pieces with very short cords. There are headphone jacks on both sides of the Quest so these headphones are ready-built to plug into both of these ports and provide you a more private and immersive sound experience.

While the official earphones are one option, these Oculus Quest earbuds from Kiwi offer a cheaper alternative with a few sacrifices. Sporting the same dual-cord design as the official headphones, the Kiwi headphones won’t give you the best sound quality around, but they are considerably cheaper than the official alternative. If you want some cheap headphones for your Quest, these are a good option. You can read our full review here.

The Kiwi Oculus Quest Earbuds are available on Amazon for about $20.

Hand Straps

The Kiwi Design Knuckle Straps are available for $19.99; however, we’ve also heard good things about the AMVR versions for $30. There are plenty of straps in this style available on Amazon.

Source:

Immersion refers to becoming fully absorbed in a situation. It is made possible by technologies that shield us from the real world and that currently address mainly two sensory channels: the eye and the ear. Technology-based immersion is already being used successfully today, for example in medical settings to treat phobias. In the future, integrating more movement, touch, and smell will make stronger immersive experiences possible.

#lernvontorsten – 006 – What Is Immersion? – The Senses as a Gateway to a New World


Source:

Torsten Fell, Institute for Immersive Learning

British technology company Immerse has been working on its virtual reality (VR) training solution, the Virtual Enterprise Platform or Immerse VEP, for the past six years. The platform has been available to select clients such as BP, Shell, DHL, GE Healthcare and Facebook for the last three, and today marks its official launch for other companies to adopt.

Immerse VEP is an open VR platform designed to help businesses create their own training solutions which can then be measured and scaled as required. Content can then be deployed to hundreds of headsets like Oculus Quest or HTC Vive as the software is hardware agnostic.

Clients can import existing VR content if they have any, or use the software development kit (SDK) in Immerse VEP’s content creation tools to build their own. Once content is deployed, companies can gather training data (Immerse says ‘30 data points per user per second’ are captured) to further improve performance.
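To give a sense of scale for that capture rate, here is a back-of-the-envelope Python sketch. Only the rate of 30 data points per user per second comes from Immerse’s statement; the session length and headset count are assumed values chosen purely for illustration.

```python
POINTS_PER_USER_PER_SECOND = 30  # figure quoted by Immerse


def data_points(users: int, session_minutes: float) -> int:
    """Total data points captured when each of `users` learners completes one session."""
    return round(POINTS_PER_USER_PER_SECOND * users * session_minutes * 60)


# Assumed scenario: a single 20-minute training session.
per_learner = data_points(users=1, session_minutes=20)
# Assumed scenario: the same session rolled out to 200 headsets.
per_rollout = data_points(users=200, session_minutes=20)

print(f"{per_learner:,} data points per learner")
print(f"{per_rollout:,} data points across the rollout")
```

Under these assumptions a single learner generates 36,000 data points in one session, and a 200-headset rollout generates 7.2 million, which is why central collection and analysis matter at this scale.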

“We have been working closely with our clients to create an enterprise VR platform that really answers the needs of businesses and is truly flexible, usable and scalable,” said Justin Parry, Co-founder and COO of Immerse in a statement. “VR is such a powerful learning tool, and we want to make it available to as many businesses as possible, especially at a time when technology for effective remote-learning is so critical and businesses are seeking ways of building the resilience of their workforce.”

“Any VR content can be used on our platform, meaning it can be securely deployed to all employees via standalone VR headsets, with data gathered centrally,” Parry continues. “This user flow is groundbreaking: it simply doesn’t exist anywhere else. Many business leaders and training professionals already recognise the transformative power of VR, but a solution for enterprise needs to be practical, results-driven, and long-term. This platform has the potential to push VR into the heart of enterprise, making it accessible on a whole new scale.”

Immerse offers a free trial of the VEP SDK to get potential clients acquainted with the system. Training is a major accelerator in the commercial adoption of VR technology, with others in the field including ElevateXR and Bodyswaps.

Source:

https://www.vrfocus.com/2020/08/immerses-virtual-enterprise-platform-opens-its-doors-to-companies-worldwide/

As futuristic as it sounds, early-stage applications of the Spatial Web or Web 3.0 are already here. Now is the time for leaders to understand what this next era of computing entails, how it could transform businesses, and how it can create new value as it unfolds.

Introduction

The once-crisp line between our digital and physical worlds has already begun to blur. Today, we hear of surgeons experimenting with holographic anatomic models during surgical procedures.1 Manufacturing, maintenance, and warehouse workers are measuring significant efficiency gains through the use of the Internet of Things (IoT) and augmented reality.2 Cities are creating entire 3D digital twins of themselves, helping to improve decision-making and scenario-planning.3 Still, there’s a sense that we’re not “there” yet.

Today’s technology applications are just glimmers of the emerging world of the Spatial Web, sometimes called Web 3.0, or the 3D Web (see sidebar, “Emerging definitions: Web 3.0 and the Spatial Web”). It is the next evolution in computing and information technology (IT), on the same trajectory that began with Web 1.0 and our current Web 2.0. We are now seeing the Spatial Web (Web 3.0) unfold, which will eventually eliminate the boundary between digital content and physical objects that we know today. We call it “spatial” because digital information will exist in space, integrated and inseparable from the physical world. (To read an example of how it might work in reality, see the sidebar, “A vision of the Spatial Web in health care.”)

This vision will be realized through the growth and convergence of enabling technologies, including augmented and virtual reality (AR/VR), advanced networking (e.g., 5G), geolocation, IoT devices and sensors, distributed ledger technology (e.g., blockchain), and artificial intelligence/machine learning (AI/ML). While estimates predict the full realization of the Spatial Web may be 5–10 years away, many early-stage applications are already driving significant competitive advantage.4


By vastly improving intuitive interactions and increasing our ability to deliver highly contextualized experiences—for businesses and consumers alike—the Spatial Web era will spark new opportunities to improve efficiency, communication, and entertainment in ways we are only beginning to imagine today. For forward-thinking leaders, it will create new potential for business advantage—and, of course, new risks to monitor.

In this article, we will define the vision for the Spatial Web, discuss the technologies it is built upon, and describe the path to maturity. The goal for most companies is not to build a Spatial Web; however, understanding its capabilities can help leaders better prepare for the long term, get more out of their current investments in the short term, and participate in critical conversations happening today that could decide how this coming era affects both business and society.

EMERGING DEFINITIONS: WEB 3.0 AND THE SPATIAL WEB

“…the world around us is about to light up with layer upon layer of rich, fun, meaningful, engaging, and dynamic data. Data you can see and interact with. This magical future ahead is called the Spatial Web and will transform every aspect of our lives, from retail and advertising, to work and education, to entertainment and social interaction.” —Peter Diamandis5

There is no single definition for Web 3.0, the computing era that follows our current, mobile-powered Web 2.0. Many people identify Web 3.0 with the Semantic Web, which centers on the capability of machines to read and interact with content in a manner more akin to humans.6 Recently, definitions of Web 3.0 have begun to include distributed ledger technologies, such as blockchain, focusing on their ability to authenticate and decentralize information. Theoretically, this could remove the power of platform owners over individual users.7

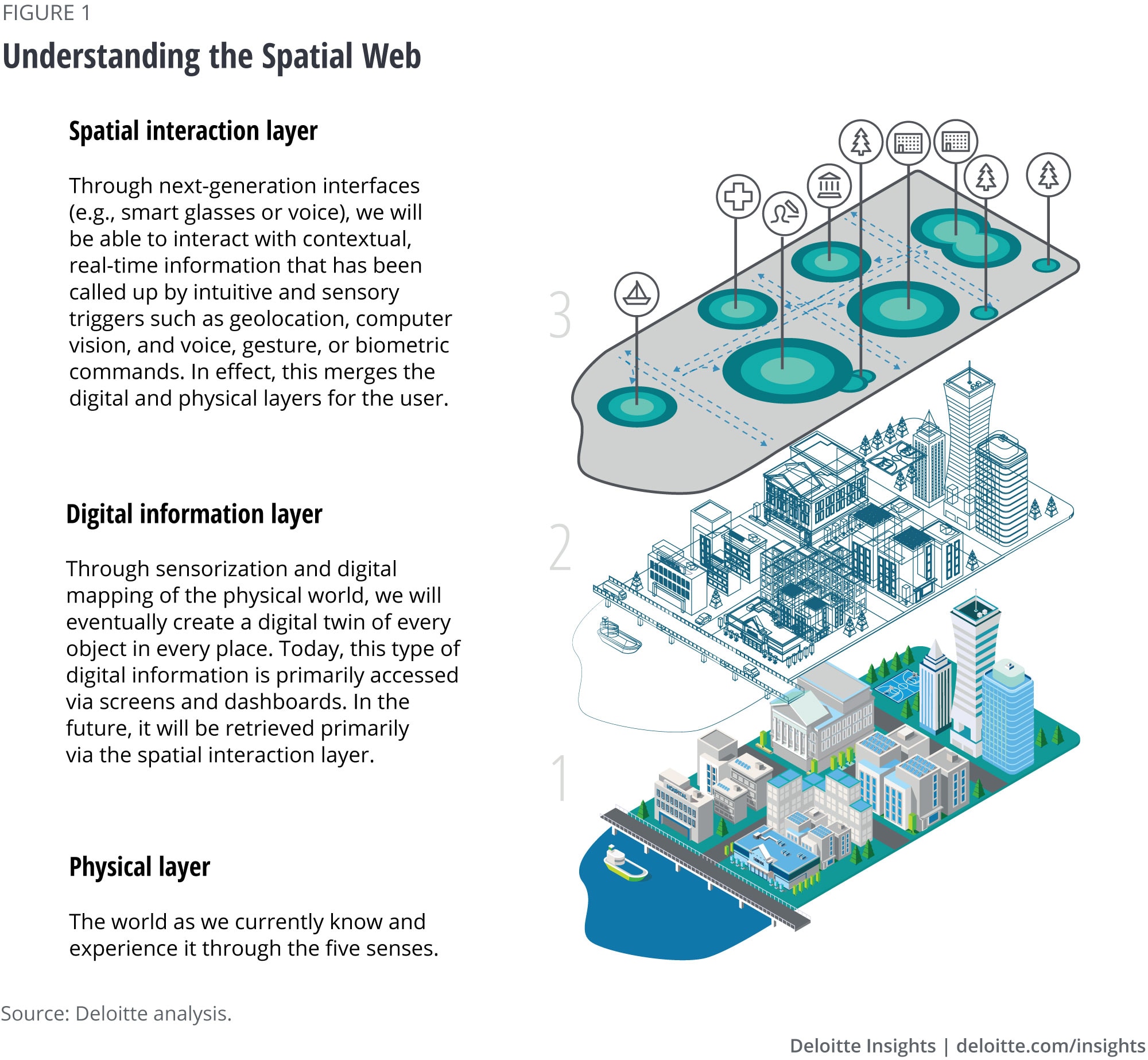

Each of these perspectives begins to describe a similar end state; they just start from different technology vantage points. We use the term “Spatial Web” because it emphasizes the shift in experience for the end user by transferring interaction with information away from screens and into physical space (figure 1).

A VISION OF THE SPATIAL WEB IN HEALTH CARE

Step a few years into the future, where connectivity, processing power, digital devices, and our ability to analyze and contextualize data have advanced considerably. In this world, much of our interaction with digital information happens away from traditional screens, tablets, and phones. Here, we meet a leading heart surgeon and researcher of cardiovascular health. She is starting her day, not by checking her phone, but by turning on her hands-free, intelligent interface.8 This advanced device curates multiple media channels that filter contextual information into her field of view, from social media and the news to her work schedule and secure patient information. This morning, she uses it to call a self-driving car to take her to the hospital; on the way, she attends a brief, holographic video conference with her child’s teacher. As the car reaches the hospital, the device shifts settings to enable a secure and rich mixed-reality medical environment, lowering the priority of notifications from her personal life.

She begins work by digitally “scrubbing in” for robotic surgery on a patient thousands of miles away.9 In this procedure, she will virtually guide her onsite human and robotic colleagues, who are present with the patient in the physical operating room. She’ll administer the procedure using combinations of “see-what-I-see” features, haptic-enabled and custom 3D-printed surgical instruments, and hands-free digital models. But before they begin, the team virtually convenes around a 3D digital twin of the patient’s heart.10 This exact digital replica has been a valuable tool in helping establish a surgical plan; thus far, it has been used to collaboratively monitor the patient’s condition, customize the surgical implants,11 and help the patient visualize the procedure. As the team moves into surgery, this digital twin provides real-time, AI-supported insights on the patient’s condition, poised to alert the surgical staff to potential alternate interventions. Fortunately, this surgery goes as planned; our surgeon successfully completes the procedure, and onsite colleagues close the patient for recovery.

As the team finishes, data from the procedure is collected, analyzed, and collated for a variety of purposes, based on the needs and security permissions of whoever is accessing it. It will be used to support the individual patient’s postoperative care team; other parts of the health system may simultaneously draw on the same database, using its billions of data points to help monitor public health and system capacity, run simulations, and improve outcomes.12

We are already seeing the early signs of this imagined future, although the interconnected network required across patient care, R&D, hospital systems, and other supportive industries may be a number of years away. However, we can see the value of these new interfaces and digital threads intertwining seamlessly for more effective results, both for the individual and the system. This integrated physical and digital network is expected to be constructed over time, built on the convergence of advanced technologies layered and designed both securely and interoperably.13

Building the Spatial Web

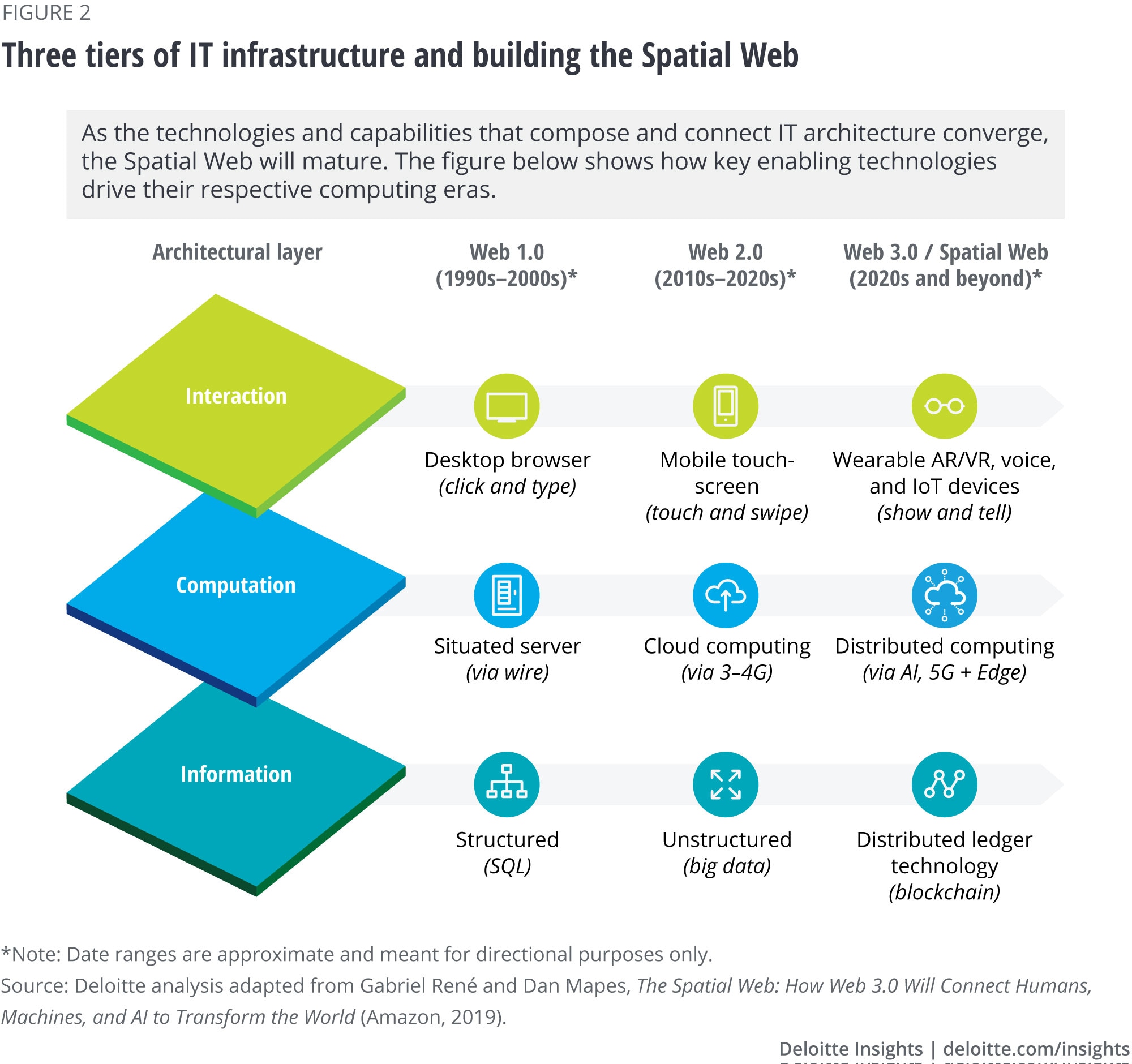

While we can’t predict precisely when Spatial Web maturity will arrive, the trend line toward this future has been emerging for decades. Just as earlier capabilities gave rise to Web 1.0 and Web 2.0, today’s leading technologies are fueling and informing the evolution into the Spatial Web as they advance across the three basic tiers of IT architecture (see figure 2):

- Interaction: The software, hardware, and content that we ultimately interact with

- Computation: The logic that enables the interaction

- Information: The data and structure that allow computational functions to be completed accurately, efficiently, and securely

Gabriel René, executive director of the Spatial Web Foundation, notes: “Downstream of these technology investments are the combinatorial benefits that come when you are not implementing them entirely separately, but as part of a larger strategy. This is how we upgrade to Web 3.0.”14


Interaction: AR/VR devices are expected to be a primary gateway for humans to access the Spatial Web, although form factor may eventually range from AR glasses or digital contact lenses to haptic wearables, IoT devices, sensors, robots, autonomous vehicles, and beyond. For the Spatial Web to become widely adopted, AR interfaces in particular will need to become more affordable and comfortable to wear for long periods of time.

In recent years, significant investments have occurred in this area. Traditional incumbents, such as Google,15 are continuing to develop and evolve AR hardware. Facebook—an active participant in the VR space since its US$2 billion acquisition of Oculus VR in 2014—has recently made a slew of investments focused on AR and the AR Cloud, including a project called Live Maps that will reportedly create shared 3D maps of the world.16 Apple17 has been developing its own light-weight AR glasses and has applied for a series of patents that could significantly reduce the size of such AR devices.18

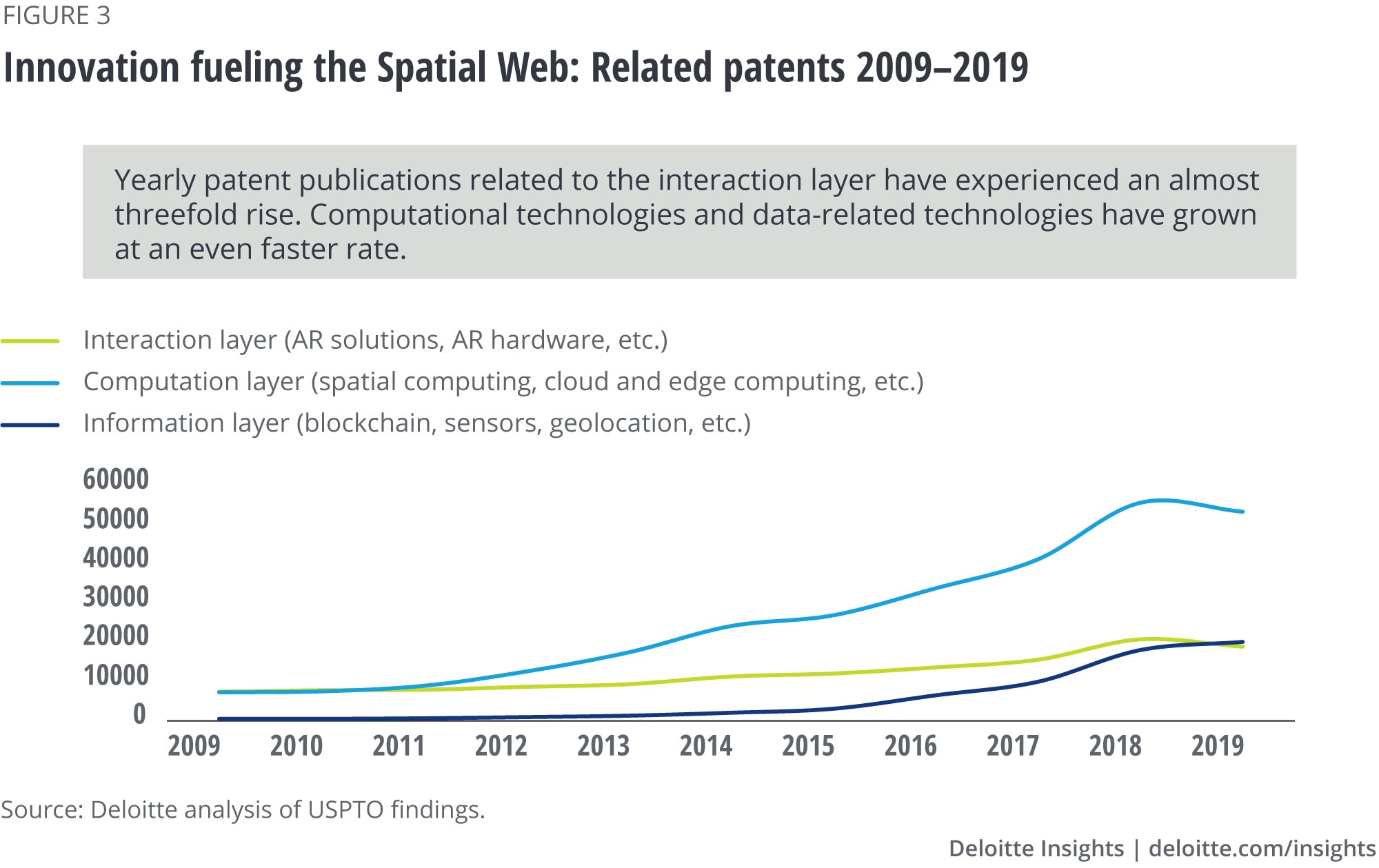

Zooming out to look at the broader tech industry, we see upward trends in innovation and development of technologies supporting the interaction layer of the Spatial Web. For example, the number of AR-related patents published yearly in the United States grew more than threefold over the last ten years (see figure 3).19

Computation: AI/ML will play a foundational role in Spatial Web computation. It enables machines and devices to understand the nondigital world, for example, via computer vision and natural language processing. It will also drive contextual, personalized experiences via AI’s ability to self-program, continuously learn, and make contextual decisions. This will be critical for Spatial Web maturity, and it will require immense amounts of processing power. In addition, to rapidly and securely transmit rich, high-definition, contextual media experiences from physical objects to a computation layer and back to the end user, extremely fast network connectivity will be required. All of this will depend on high-bandwidth networks and more distributed locations for computing, making 5G connectivity and Edge computing core enablers.

Edge computing helps to reduce latency by decreasing the distance between the device and a cloud-based processor.20 5G, which can enable download speeds up to 100 times faster than 4G,21 has seen a high level of investment in recent years, driving predictions that the number of connections could grow from roughly 10 million in 2019 to over 1 billion in 2023, representing just under 10% of all mobile device connections.22 Recent economic shifts may slow this over the short term; however, as of January 2020, 5G had already been deployed in 378 cities across 34 countries.23
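To put the 5G forecast above in perspective, the short sketch below works out the compound annual growth rate implied by the quoted figures (roughly 10 million connections in 2019 rising to over 1 billion in 2023). The function name `implied_annual_growth` is our own, and the calculation assumes smooth year-over-year compounding, which real adoption curves rarely follow.

```python
def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Compound annual growth rate that takes `start` to `end` over `years`."""
    return (end / start) ** (1 / years) - 1


# Figures quoted in the text: ~10 million connections in 2019, ~1 billion in 2023.
rate = implied_annual_growth(10e6, 1e9, 2023 - 2019)
print(f"Implied compound annual growth: {rate:.0%}")
```

Under these assumptions, a hundredfold increase over four years means connections would need to slightly more than triple every year.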

A key infrastructure to support this level of computation is the AR Cloud (see sidebar, “AR Cloud and 3D-mapping the physical world”).24 This will require technology ranging from machine vision to 3D modeling technologies that will allow the creation, positioning, and anchoring of digital content over physical objects.

Information: Data sources and types are increasing constantly and will only accelerate as sensorized devices proliferate. This makes privacy a critical consideration, and it is why many consider distributed ledger technologies, such as blockchain, to be foundational.25 Through built-in immutability, data integrity and security are ensured, allowing platforms or companies to incorruptibly manage access and identity control.26 Because of this, blockchain’s authentication abilities can enable open ecosystems, without restricting users, as many platform-based applications do today. This ability to decentralize spurs the hope that the Spatial Web will realize the vision of a truly open and democratized internet.27
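The immutability property mentioned above can be illustrated with a minimal hash chain. This is only a sketch of the tamper-evidence idea, not a distributed ledger: there is no network, consensus, or identity management, and the record fields (`asset`, `access`) and helper names are hypothetical.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index, data, prev_hash):
    """SHA-256 over the block's contents, including the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "prev": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain, data):
    """Append a record that is cryptographically linked to the one before it."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    index = len(chain)
    chain.append(
        {
            "index": index,
            "data": data,
            "prev": prev_hash,
            "hash": block_hash(index, data, prev_hash),
        }
    )


def is_valid(chain):
    """Recompute every hash and link; any edit to past data breaks the chain."""
    expected_prev = GENESIS_HASH
    for i, block in enumerate(chain):
        if block["index"] != i or block["prev"] != expected_prev:
            return False
        if block["hash"] != block_hash(block["index"], block["data"], block["prev"]):
            return False
        expected_prev = block["hash"]
    return True


ledger = []
append_block(ledger, {"asset": "sensor-17", "access": "granted"})
append_block(ledger, {"asset": "sensor-17", "access": "revoked"})
print(is_valid(ledger))  # True

ledger[0]["data"]["access"] = "revoked"  # tamper with an earlier record
print(is_valid(ledger))  # False
```

Because each block's hash covers the previous block's hash, changing any earlier record invalidates the stored hashes from that point on. Production systems add replication and consensus so that no single party can simply recompute the chain.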

Apart from its security capabilities, blockchain also plays a role in managing entities in the physical world—from buying location-based digital real estate to managing nonprivate spaces such as parks and even nongovernmental locations such as oceans. One company, XR Web, has already started selling spaces on the Earth’s digital layer.28

All told, this is a story of technology converging across the three layers of IT. We see measurable innovation across all three tiers discussed in this section. Patent publications are typically considered a good indicator of innovation activity and investments, and applications related to Spatial Web technologies have shown clear growth over the last decade (see figure 3). While not all of these patents are exclusive to the Spatial Web, every innovation can help in its eventual realization. Furthermore, Spatial Web–specific patents (those that specifically mention Spatial Web, AR cloud, or 3D Web) have demonstrated a tenfold increase in the last 10 years.29

AR CLOUD AND 3D-MAPPING THE PHYSICAL WORLD

The AR Cloud is a key enabler of the Spatial Web; some groups even use the two terms interchangeably. According to the Open AR Cloud association (OARC), the simplest definition of the AR Cloud is a 3D digital copy of the world.30 By creating 1:1 scale digital models that are machine-readable, updated in real time, and associated with precise geolocation information, spatial experiences can become richer, more accurate, and more connected. Ultimately, its creation helps us fully erase the line between digital and physical objects. Today, a variety of companies are working toward its development.

Maps of physical spaces and digital twins will be created for everything: cities, rooms, retail spaces, public areas. Once maps are built, locations can be defined in space, and new types of transactions and interactions become possible. As people and objects begin to move through these maps, it should become possible to gather a wealth of previously unavailable information about people and processes: how they gather, move, and interact, and which experiences they find useful.
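As a rough illustration of how digital content might be associated with precise geolocation, the sketch below stores a few anchors and returns those within a given radius of a user's position. It is a deliberately simplified assumption: a real AR Cloud would likely rely on full 3D poses and visual positioning rather than latitude and longitude alone, and the `Anchor` class, labels, and coordinates here are hypothetical.

```python
import math
from dataclasses import dataclass

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in meters


@dataclass(frozen=True)
class Anchor:
    """A piece of digital content pinned to a physical location."""
    label: str
    lat: float
    lon: float


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in meters between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def anchors_within(anchors, lat, lon, radius_m):
    """Return the anchors located within `radius_m` meters of the given position."""
    return [a for a in anchors if haversine_m(lat, lon, a.lat, a.lon) <= radius_m]


# Hypothetical content anchors about 1.1 km apart.
anchors = [
    Anchor("store-entrance-offer", 52.5200, 13.4050),
    Anchor("park-wayfinding-sign", 52.5300, 13.4050),
]
nearby = anchors_within(anchors, 52.5201, 13.4050, radius_m=500)
print([a.label for a in nearby])
```

A linear scan is enough for a handful of anchors; at city scale, a spatial index would be the more natural choice.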

Getting from here to there: A path to the mature Spatial Web

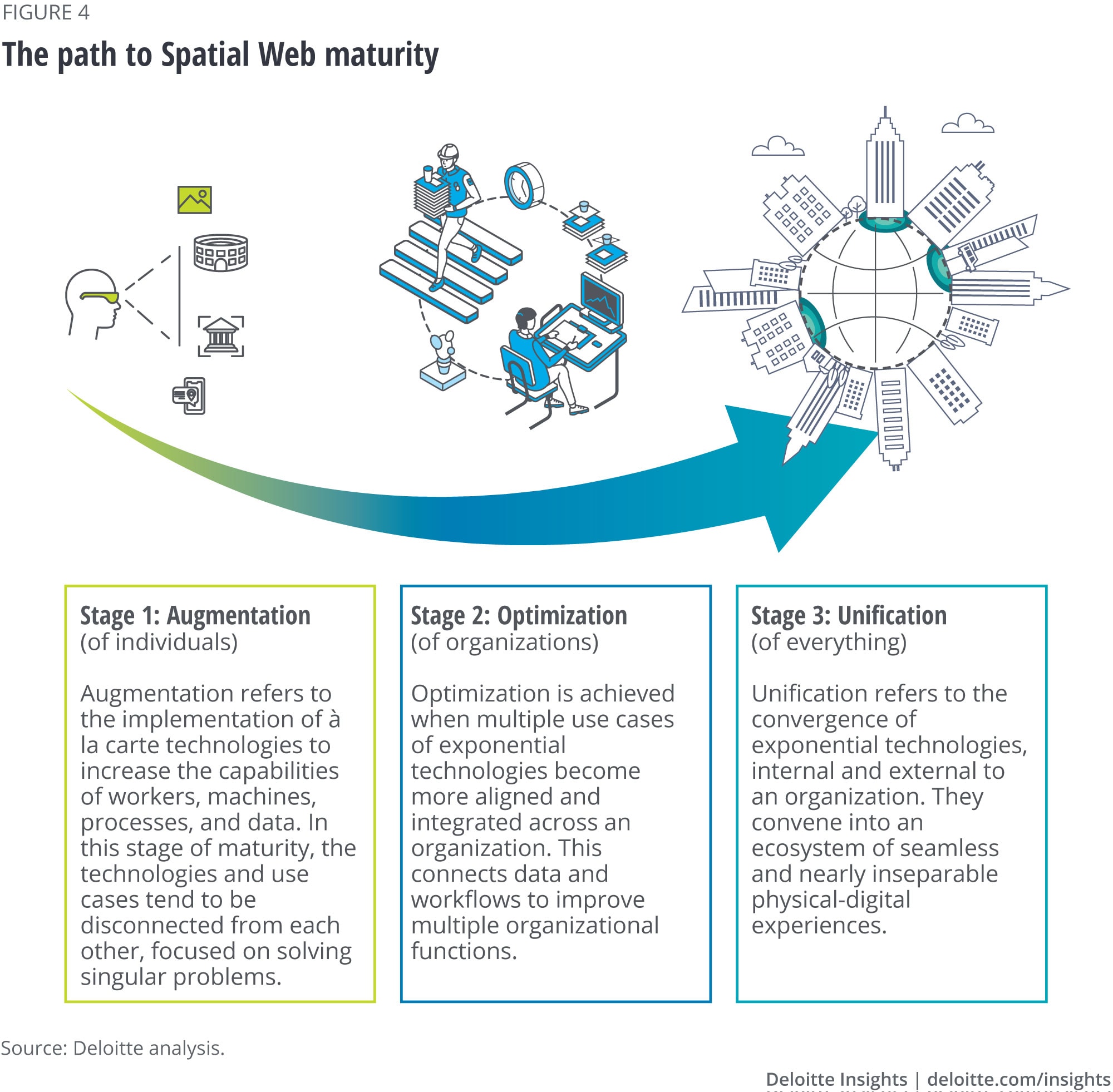

As described in the previous section, the Spatial Web will require advances in all three tiers of IT infrastructure to come to full fruition. The path to maturity can be viewed in three general stages: Augmentation, Optimization, and Unification (see figure 4). While the Unification stage is still estimated to be a few years away, many companies are already generating value through the Augmentation and Optimization phases.

In the first stage, Augmentation, organizations implement technologies to “augment” the capabilities of workers, machines, and processes. These implementations tend to be disconnected from each other, and workflows remain largely the same as before.

Most industries are already at this stage, implementing technologies such as AR to support frontline workers in manufacturing, maintenance, and field service;31 VR learning to support difficult, expensive, or dangerous skill development;32 and the IoT to drive predictive maintenance.33 These use cases are already demonstrating valuable returns for companies. For example, one company compared AR line-of-sight instructions with a traditional manual for wind turbine assembly; with the AR workflow, technician performance improved by 34%.34

Through successful applications, organizations lay the foundation for the second stage, Optimization, where use cases become more integrated and cross-functional. For example, in earlier stages of maturity, a digital twin could allow factory engineers to move from reactive to predictive equipment maintenance.35 As the organization becomes more sophisticated, it begins to integrate data and identify opportunities for cross-functional optimization via a broader asset performance management (APM) system. APM helps inform not just maintenance timing, but also operational procedures, and material and part procurement, which can lead to gains across the enterprise—from material spend to savings on insurance premiums resulting from deep reductions in catastrophic failures.36

In another example of organizational optimization, Wayfair saw the opportunity for AR to help customers visualize and place furniture in their homes.37 While the company measured significant boosts in conversion and reduced returns as a result of the experience, the time and effort in creating high-quality 3D product models spurred it to look for additional uses. Today, almost its entire online catalog is created using those 3D models, instead of standard photography. The level of cost savings from catalog and marketing asset production has unlocked value and further investment in 3D experiences across the company.38

The third stage, Unification, is when the more complete vision of the Spatial Web emerges, as technologies and ecosystems converge. While the earliest manifestations have been seen in gaming, a number of 3D-specific startups such as Ubiquity639 and WRLD,40 in addition to long-standing companies such as Esri,41 are actively developing spatial capabilities for broader enterprise and consumer applications. Ultimately, these companies are looking for a platform that helps everyone move seamlessly from context to context, with the right data and experiences available at the right time and location.

There is a lot of conjecture about how the mature Spatial Web will manifest. Possibilities range from a completely open-source, democratized Spatial Web that anyone can join (irrespective of device) to platform-defined, walled-garden Spatial Web(s) that are owned and governed by a small number of large companies. The way technology advances and which group defines the Spatial Web will have enormous influence over how this new world unfolds—and the vast amounts of data it will generate.

Many early proponents are hopeful that the mature Spatial Web will embody a return to the early vision of “universality,” inspired and driven by World Wide Web inventor Tim Berners-Lee.42 They argue that Web 1.0 became possible and valuable because of the network effect and innovation its openness welcomed through the establishment of open web standards. Although Web 2.0 made user-generated content easier and, some may say, more democratic, its heavily private and app-driven networks have forgone openness.43 This has made it difficult for users to switch platforms and has reduced the interoperability of today’s digital interactions. Many argue this impedes innovation and consumer control.44 This is why, at this early stage of the Spatial Web/Web 3.0, a number of groups, including Open AR Cloud, IEEE, and the Spatial Web Foundation, are pushing to create open standards that will realign behind a decentralized and democratic set of values.45

Standards-setting may sound dry, but it is central to determining the future of the Spatial Web—and who will control the vast amounts of data it generates. The outcome will have significant implications economically, socially, and ethically (see sidebar, “Ethics and privacy challenges in the Spatial Web”). This is why it is so important for all types of companies to participate in creating these standards.

ETHICS AND PRIVACY CHALLENGES IN THE SPATIAL WEB

Experiences available on the Spatial Web could sway our view of reality to a degree never before seen. Ethical issues related to fair and responsible data usage, as well as privacy, ownership, security, and authentication are paramount.

This is a complex and challenging topic that cannot be covered fully here. To learn more about Deloitte’s perspective on data privacy, security, regulation, and ethical usage, we recommend reading the following articles as a starting point:

Recommendations: Where to begin for business leaders

In the coming years, business strategies and consumer behaviors will evolve around the Spatial Web’s growing ability to deliver intuitive interactions with highly contextual and personalized information. Most businesses aren’t going to build their own Spatial Web; they will participate in it as it becomes the next major era in computing, analogous to how Web 2.0 capabilities have driven new mobile behaviors and ways of working.

Many business leaders may get the impression that this evolution is too far off to warrant attention. However, there are important actions to be taken today to prepare for, benefit from, and shape this new era as it unfolds. While the best entry points may vary by industry segment, the following actions will be beneficial for most:

- Build with the future in mind. Most large companies have already started working with many of the technologies enabling the Spatial Web, but often they aren’t building with that end-state in mind. This can cause them to miss valuable efficiencies. For example, start looking for ways to streamline and connect 3D assets—if you’re a manufacturing company, bring 3D product models from product ideation to factory technician training, all the way through to marketing and customer support.

- Experiment with IoT and location-based sensors. Tapping into sensor data gives a business operational awareness, which, in turn, can yield optimized operations. Learning to manage data from sensors (whether from retail stores’ camera feeds, trackers on trucks, or infusion pump sensors in hospitals) helps prepare the business to handle the volume of data and to begin benefiting from the insights that data can provide. An increasing variety of sensors will become key inputs for Spatial Web users.

- Map out your business. Whether it’s modeling large facilities for wayfinding, having a digital twin of your brick-and-mortar store shelves and inventory, creating geographical models to optimize logistics, or creating a digital twin of the manufacturing line, it’s going to become increasingly important to have a digital representation of your business and the location of its elements. This helps lay the groundwork for monitoring and optimizing the business through its digital equivalent.

- Insist on interoperable, ethical standards. The Spatial Web is a convergence of emerging technologies. Both established and new organizations are already starting to establish standards to enable interoperability across applications. These organizations and the resulting standards efforts can be strengthened by support from the business community. Jan-Erik Vinje of Open AR Cloud group urges, “Now is the time to get that perspective … and also speak about the way we think about this future and what values should be the North Star when making this technology if we want to make it benefit as many people as possible and be a good engine of economic growth and technological and societal development.”46

“Now is the time to get that perspective … and also speak about the way we think about this future and what values should be the North Star …” —Jan-Erik Vinje, managing director and co-founder of Open AR Cloud group

Truly transformative technologies enable new use cases, and without question we’ll be telling different stories about the Spatial Web five years from now. But by participating with this vision in mind from the beginning, your company may be better positioned to tell that story instead of having it told to you.

Source:

https://www2.deloitte.com/us/en/insights/topics/digital-transformation/web-3-0-technologies-in-business.html?id=us:2em:3na:4di6645:5awa:6di:MMDDYY::author&pkid=1007277