Defining spatial computing

During his 2022 re:Invent conference keynote, Amazon CTO Werner Vogels said that “3D will soon be as pervasive as video” and that “3D technology has permeated our world.” My spatial computing colleagues and I at Amazon Web Services (AWS) were excited to hear this, given how much each of our careers has revolved around 3D technology.

I come from a 3D and immersive technology background. I spent 20 years at a US Navy research and development lab in San Diego, first building up computer vision and automated target recognition technologies to support maritime domain awareness, and then most recently building out one of the largest Navy mixed reality labs. When I came to AWS, I heard the term “spatial computing” for the first time as I was interviewing. Initially, I felt the term was obscure and infrequently used, at least in the augmented/virtual reality and immersive technology space. In the seven months that I have been at AWS, however, I have heard the term more often, both internally and externally, and it has become part of my vocabulary. Still, I wonder how well it is understood and how frequently it is used by others. So, for the purposes of this blog post, I feel I should start by defining it from my perspective: I believe that spatial computing is the technology that powers immersive 3D experiences.

My colleagues and I view spatial computing as a blending of the virtual and physical worlds that enhances how we visualize, simulate, and interact with 3D data combined with location. While this definition works for my team, I know some readers might feel that other definitions work better for their business areas and use cases. For example, in the inaugural spatial computing blog post by Bill Vass (VP of Engineering for AWS), The Best Way to Predict the Future is to Simulate it, he described it as the computing that powers collaborative experiences. He goes on to state, “It is defined as the potential digitization (or virtualization, or digital twin) of all objects, systems, machines, people, their interactions and environments.” Still, some readers may disagree with that description. The reality is that spatial computing is an emerging technology, and time and adoption will ultimately drive a consensus definition. For now, let’s consider how the current spectrum of spatial computing experiences is setting the stage for the vision that Werner Vogels shared at re:Invent, and how that might help us arrive at a consensus definition.

We are starting to see the merging of a number of technologies in this broader technology space such that definitions are getting more and more complicated. Beyond the already complex task of trying to answer “what is spatial computing?” many closely related technologies are equally difficult to define, yet they often overlap with the definition of spatial computing. For example, what is the Metaverse? What is immersive technology? How does AI/ML fit in? IoT? Blockchain? 3D? The digital twin? Not to mention the actual dictionary definitions of augmented, mixed, and virtual reality versus how the public and media often use the terms interchangeably. How many times have technologists in this industry seen a news article showing a picture of a virtual reality headset under a headline about augmented reality? The photo below, frequently used in news articles about my former Navy mixed reality lab, shows a soldier wearing a virtual reality headset but interacting with information in front of him as if using augmented reality.

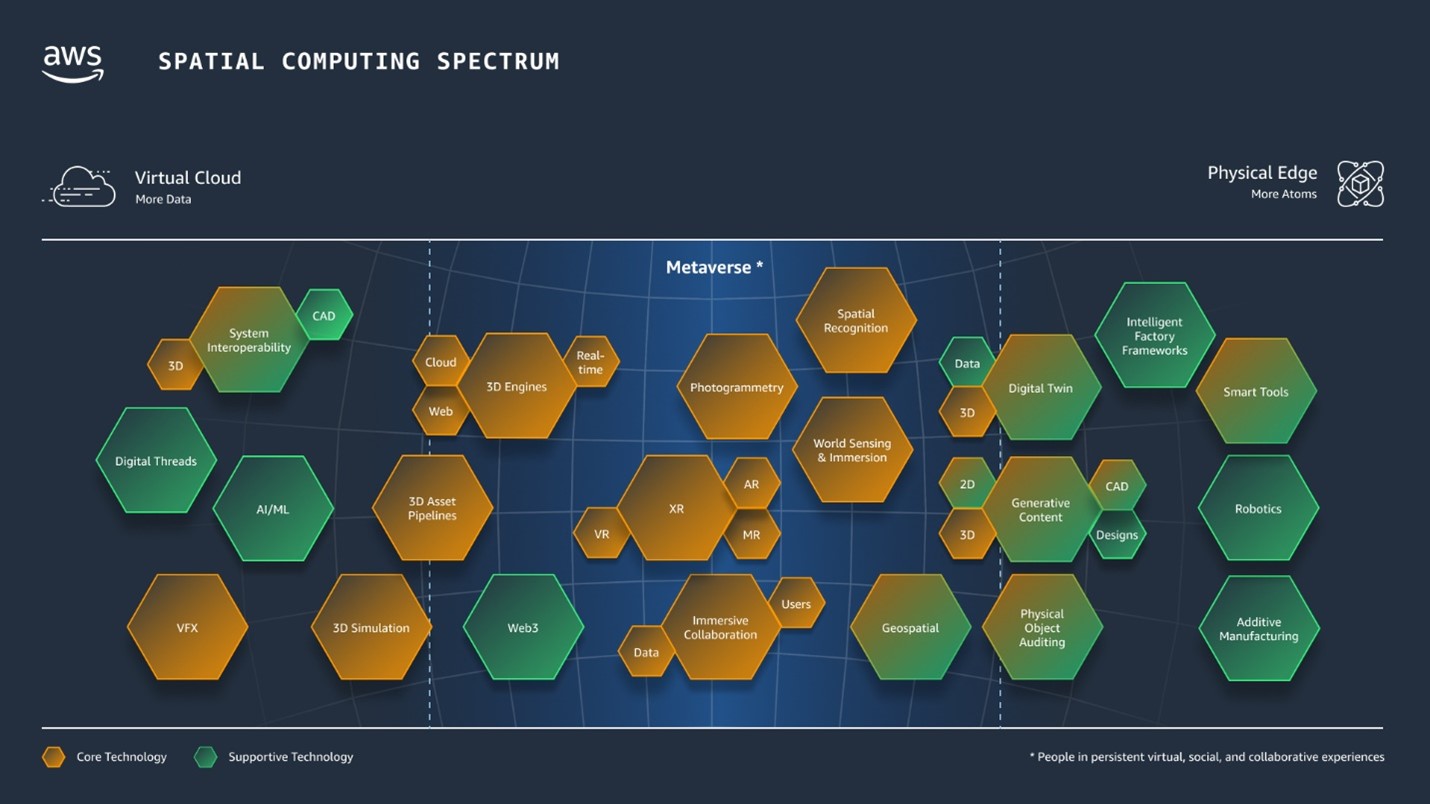

Because so many fluid and confusing definitions are being used in the technology space and in the media, my colleagues and I have a number of opinions regarding the definition of spatial computing and the technologies it supports. As a thought exercise, my team crafted the diagram below of what we call the Spatial Computing Spectrum. Our current challenge is around whether or not we should put spatial computing on a spectrum at all. Is it linear? Would it make more sense in 3D? Should we animate it? Should it be represented with a number of digital threads? All that aside, I think the information and technologies described in it are accurate.

AWS’ Spatial Computing Spectrum

Spatial computing powers quite a few technologies, as one can see from the number of hexagons in this diagram. Each hexagon represents a different technology that might be used to build a spatial experience. Through the lens of Bill Vass’ definition where spatial computing is the “potential digitization of all objects, systems, machines, people, their interactions and environments,” the spectrum of spatial computing represents technologies that might enable this digitization, either virtually or physically. On the left-hand side of the spectrum we have technologies that are more virtual or cloud based (more data). On the right-hand side we have technologies that are more at the physical edge (more atoms). While not every spatial experience will use each of these technologies, when placed on a spectrum like this, it becomes easier to see how the combination of virtual and physical can support different spatial computing use cases.

We were asked recently why CAD is at both ends of the spectrum and why digital twin is on the physical edge (the right-hand side) of the spectrum. The answer is simply that the references to CAD and digital twin on the right are associated with real-world items: actual physical things like factories, vehicles, and physical objects that you can touch. The references to CAD on the left, by contrast, refer more to the software programs and the data themselves. In a use case like augmented reality design collaboration for a manufacturing customer, the final output has yet to be defined, and is purely virtual and data driven. Alternatively, a use case such as using digital twins to monitor a real-world factory would necessitate being tied to the physical “thing itself.” By aligning on how the technology is used we can decrease the complexity of defining something as broad as spatial computing. But what about the other complex definitions I mentioned? How might this spectrum help us to define what the metaverse is?

Defining the metaverse

As you can see from the diagram, my team views the metaverse as a combination of a number of technologies roughly in the middle of this spectrum. Now, as I mentioned before, the definitions in this space are very fluid, and many groups have yet to arrive at a consensus definition for metaverse. I am sure many readers will find technologies missing in this diagram, or feel certain technologies should move further left or further right, or should or should not be lumped into the center metaverse area. I think these differences in perspective highlight how much this space is evolving right now (spatial computing, the metaverse, and supporting technologies alike) and how fast the technology domain is changing.

The definition of the metaverse, or the central region of the diagram, is a difficult one for many. Other teams in this technology space have had discussions on what it is and what it is not, just like our teams at AWS have had. It is hard to define and hard to talk about. At some conferences people jokingly say that they do not want to say the word “metaverse” out loud since there is so much controversy and ambiguity around it. While it may be difficult to define the metaverse, I think we all can agree on at least some of the technologies that will power it; those are referenced in our Spatial Computing Spectrum diagram.

Spatial computing and simulation

In Werner Vogels’ 5 tech predictions for 2023 and beyond he wrote, “Like video did for the internet, spatial computing will rapidly advance in the coming years to a point where 3D objects and environments are as easy to create and consume as your favorite short-form social media videos are today… With the increasing integration of digital technologies in our physical world, simulation becomes more important to ensure that spatial computing technologies have the right impact. This will lead to a virtuous cycle of what were once disparate technologies being used in parallel by businesses and consumers alike. The cloud, through its massive scale and accessibility, will drive this next era.” Similarly, in the blog post I previously referenced by Bill Vass he quipped, “I like to say, if you’re not simulating, you’re standing still.”

My team and I believe that simulation is going to play a key role in the spatial computing domain. Werner Vogels’ re:Invent keynote focuses heavily on simulation. In it he introduces a new AWS service called AWS SimSpace Weaver, a tool for running massive spatial simulations without managing infrastructure, and ties it back to the concept of spatial simulation. He concludes by saying that he wants us to walk away with the message of “use simulation” and “simulate everything.” Bill Vass expands on this idea further: “The digital twin is a game changer for businesses. In the same way that businesses bought personal computers for the first time in the 1970s and 80s to run spreadsheets, digital twins allow organizations the new ability to analyze and simulate complex operations. Digital twins allow businesses to model and simulate the other 90% of the business that spreadsheets can’t.” In his keynote, Werner Vogels talks about this fusion of models, sensors, and data when he shows a demo of AWS IoT TwinMaker, a tool that helps a company optimize operations by easily creating digital twins. It is technologies like these at AWS that help bring simulation tools directly to users, empowering them to use their own data to gain valuable insights for their businesses.

The marriage of digital twins, 3D content, and simulation will be a powerful capability for all businesses. Bill Vass continues by stating: “For businesses that want to move forward, digital twins are the natural progression. There are hundreds of billions of physical world resources such as vehicles, buildings, factories, industrial equipment, and production lines that will benefit from this next chapter in spatial computing powered by AWS.” It is almost difficult to imagine a business that would not benefit from one or all of these technologies. Again, all of these technologies are enabled by, or powered by, spatial computing.

Building at AWS with Spatial Computing

In my time at AWS I have learned that this is a company of builders and my team believes whole-heartedly that we should innovate and invent on behalf of our customers. Many people have heard that AWS’ number one leadership principle is “Customer Obsession” and I can confirm this to be true. As such, AWS has a customer-centric approach to building. It is well known that “90% of what we build at AWS is driven by what customers tell us matters to them,” as is stated in this article, The Imperatives of Customer-Centric Innovation. My colleagues and I are working toward developing the building blocks, tools, and examples which empower our customers to build, deliver and manage spatial experiences at scale on AWS.

I feel strongly about summarizing and explaining concepts in short video snippets. I think it is an easy and digestible way to share information. I strive to always make videos simple enough to understand and yet be impactful to a very broad and diverse technical audience. My team and I recently created the video below to help describe what spatial computing is and to highlight what AWS is doing in this space.

At the same time, my team has adopted these four pillars to describe spatial computing enablement at AWS:

Infrastructure – AWS strives to have the widest and deepest set of building blocks to enable spatial experiences. We help customers innovate and protect their investments in IP, data, and processes by being the best place to build a spatial experience.

Partners & Tooling – AWS focuses on providing a broad ecosystem of partner and AWS solutions, giving customers a choice across the full stack, from SDKs to turn-key solutions, allowing customers maximum flexibility to incorporate spatial experiences.

Open Standards – AWS strives to reduce cost and increase efficiencies for customers and partners by relentlessly promoting open interfaces and standards and by being device agnostic.

People – AWS has technical experts with years of experience around key technologies, as well as Field Service teams.

My team believes that spatial computing is what powers a diverse set of core and supporting technologies and that it is these technologies that will enable your data to reach and impact so many users. We also believe that AWS is the best place to build spatial computing experiences due to AWS’ vast infrastructure, broad set of partners and tooling, insistence on promoting openness with data and hardware, and technical experts with deep spatial background. It is my team’s goal to make AWS the best place to build, deliver, and manage spatial computing workloads at scale.

In Bill Vass’ blog post, he spends some time discussing the importance of two of these pillars: infrastructure and open standards. Regarding what enables spatial computing workloads and new capabilities, he states: “Above all else, open standards are key for protecting your investment by not getting boxed in to specific vendors or solutions. Focus on the data, not on the device. Remember, your data is the valuable part – data is the new oil.” The data that companies own and operate is priceless, and it is that data that feeds these spatial computing workloads, allows us to gain valuable insights from simulations, and lets us experience an immersive new world.

Conclusion

With all of this being said, how might we begin to refine the definition of spatial computing and work towards a consensus? I propose that we begin to think of spatial computing, 3D, the metaverse, and all the technologies on the Spatial Computing Spectrum as a means to simulate things, much like Werner Vogels noted in his closing keynote comments. How might you use simulation in your company? With your customers? What might you build to simulate things? I spoke about how all of these definitions are fluid so why not begin to think of all these technologies as simulation technologies? Gaming technologies to simulate real-life or other-world scenarios. 3D design technologies to simulate the visual aspects of newly designed concepts. Even the metaverse as a simulation technology, to simulate the real world. The possibilities are endless.

“Model the world,” Werner Vogels concludes, “now go build.”

Photo: Werner Vogels “3D will soon be as pervasive as video”