It’s something that connects us all: talking about the weather. And in recent years, there seems to be more and more to talk about, from violent hurricanes and record high temperatures to torrential flood-causing rain and devastating fires.

The Weather Channel television network has been bringing expert weather forecasts and analysis to viewers in the United States for nearly 40 years. Recently, the company has looked to new ways to engage and educate viewers, working with The Future Group in 2018 to create special immersive mixed reality segments on lightning and tornadoes, and later in the year on storm surges and wildfires.

Those one-off segments were extremely impressive, garnering extensive press coverage and widespread praise for their ability to convey the reality of dangerous weather; one of them even won an Emmy. However, they took significant time to plan and choreograph, and although they used real-time rendering courtesy of Unreal Engine, they did not go out live to air. Instead, they were recorded live to tape a few hours before airtime and edited in post.

But what if The Weather Channel could bring live immersive mixed reality (IMR) to their forecasts every day? On June 2, 2020, the network debuted its new IMR weather studio with exactly this goal.

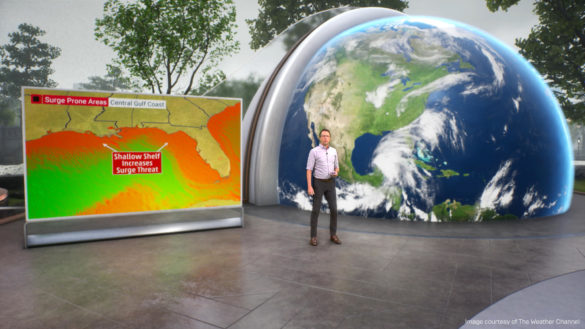

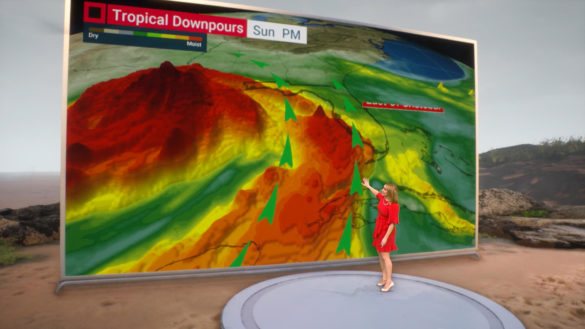

The new studio extends the physical space, so that half the studio is physical and the other half virtual, with presenters able to move seamlessly between the two halves. The virtual half has a glass wall at its boundary that can drop away to give the presenters access to the virtual environment that appears beyond the wall, offering them the ability to show the weather “outside” and even step into the space.

The environment changes to reflect the topic, becoming a coastal region if a hurricane is approaching, for example, or a mountainous region if the subject is a snow report for skiers. To bring these environments into the studio, a team captured HDR footage from 50 key locations across the country.

“It’s an incredibly valuable tool to help us enhance the storytelling,” says Mike Chesterfield, Director of Weather Presentation at The Weather Channel. “People really do respect the content—they can see themselves in those situations, because it’s so realistic.”

The studio also leverages live weather data to drive 3D charts and bar graphs, and even presents seven-day forecasts with a series of animated 3D MR weather elements—rain, clouds, sun, lightning, etc.—over virtual cities. It can bring up a 100-foot monitor at the click of a button, or show weather systems on a global scale, literally, with the help of a massive 3D virtual globe.

“This is the first truly useful full-time virtual studio that I’ve seen being used in an information network in the US,” says Chesterfield. “We’re doing totally new things for weather presentation, and we’re doing it all live.”

Behind the scenes: a look at the technology

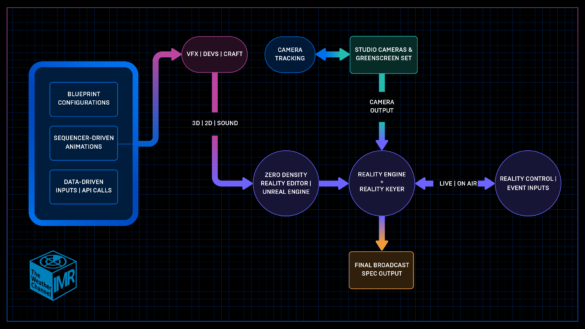

With the responsibility of integrating all the live elements into the virtual environment, the network’s Senior Technical Artist Warren Drones explains how everything comes together.

The studio’s pipeline features a traditional broadcast setup with cameras and a green screen set, running in parallel with Zero Density’s Reality Engine, an Unreal Engine-based real-time broadcast compositing system, and Reality Keyer, which Zero Density says is the world’s first and only real-time image-based keyer that works on the GPU. Instead of assuming a single color value for the entire background as with chroma keying, image-based keying compares the captured video with a clean plate, enabling subtle transparent details and shadows to be retained.
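To make the difference between the two keying approaches concrete, here is a minimal sketch in Python. It is not Reality Keyer's actual algorithm, which the article does not describe; the function names, the tolerance value, and the tiny three-pixel "image" are all assumptions chosen for illustration. It only shows why comparing against a clean plate copes with an unevenly lit green screen where a single key color does not.

```python
import math
from typing import List, Tuple

Pixel = Tuple[float, float, float]  # RGB values in the range 0..1


def color_distance(a: Pixel, b: Pixel) -> float:
    """Euclidean distance between two RGB colors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def chroma_key_alpha(pixel: Pixel, key_color: Pixel, tolerance: float = 0.3) -> float:
    """Chroma keying: compare every pixel with ONE assumed background color."""
    return min(1.0, color_distance(pixel, key_color) / tolerance)


def image_based_alpha(pixel: Pixel, plate_pixel: Pixel, tolerance: float = 0.3) -> float:
    """Image-based keying: compare each pixel with the clean plate at the same position."""
    return min(1.0, color_distance(pixel, plate_pixel) / tolerance)


# Hypothetical data: a well-lit green pixel, a darker green corner, and a
# position where the presenter stands in front of the screen.
clean_plate: List[Pixel] = [(0.10, 0.80, 0.10), (0.05, 0.50, 0.05), (0.10, 0.80, 0.10)]
frame: List[Pixel] = [(0.10, 0.80, 0.10), (0.05, 0.50, 0.05), (0.80, 0.60, 0.50)]
key_color: Pixel = (0.10, 0.80, 0.10)

chroma_alphas = [chroma_key_alpha(p, key_color) for p in frame]
plate_alphas = [image_based_alpha(p, q) for p, q in zip(frame, clean_plate)]

print("chroma key alpha: ", chroma_alphas)
print("image-based alpha:", plate_alphas)
```

In this toy case the single-color key treats the darker corner of the empty screen as foreground, while the clean-plate comparison leaves it fully transparent. Both approaches mark the presenter pixel as opaque.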

To create a clean plate for a moving camera, Reality Keyer provides the ability to create a virtual version of the green screen cyclorama background; a captured image of the physical green screen set is mapped onto this digital double. The tracking data from the camera (the network uses a Mo-Sys system) is piped into this node, enabling the system to create a clean plate for every frame.

The static elements of the virtual set draw heavily from the previous physical set; in fact, the team scanned the physical materials to ensure a perfect match. Props are created in 3ds Max and brought into Unreal Engine via FBX. For environmental elements, the team uses the extensive library of high-quality 2D and 3D Quixel Megascans, which are now free for all use in Unreal Engine.

VFX artists create effects such as rain, snow, fire, and water in Unreal Engine’s Niagara VFX system. Animations are driven by the Sequencer multi-track nonlinear editor. Live weather data imported through the API is used to drive 3D charts and graphs, and even to cause rain to fall or the sun to go behind a cloud.
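As a rough illustration of data-driven effects, the sketch below maps a weather observation to two scene parameters. Everything in it is an assumption: the article does not name the weather API or its fields, and `WeatherObservation`, `SceneParameters`, `scene_from_weather`, and the scaling constants are hypothetical. In the studio, values like these would be fed to the engine's effects and lighting rather than computed in Python.

```python
from dataclasses import dataclass


@dataclass
class WeatherObservation:
    """Hypothetical subset of a live weather feed."""
    precipitation_mm_per_hr: float
    cloud_cover_fraction: float  # 0.0 = clear sky, 1.0 = overcast


@dataclass
class SceneParameters:
    """Hypothetical values handed to the rain effect and the sun light."""
    rain_spawn_rate: float  # particles per second
    sun_intensity: float    # 0.0 = fully hidden, 1.0 = full sun


def clamp(value: float, low: float, high: float) -> float:
    return max(low, min(high, value))


def scene_from_weather(
    obs: WeatherObservation,
    max_spawn_rate: float = 5000.0,       # assumed upper limit for the effect
    heavy_rain_mm_per_hr: float = 10.0,   # assumed rate that maps to full intensity
) -> SceneParameters:
    """Scale rain linearly up to a cap and dim the sun as cloud cover grows."""
    rain_fraction = clamp(obs.precipitation_mm_per_hr / heavy_rain_mm_per_hr, 0.0, 1.0)
    sun_intensity = 1.0 - clamp(obs.cloud_cover_fraction, 0.0, 1.0)
    return SceneParameters(rain_fraction * max_spawn_rate, sun_intensity)


print(scene_from_weather(WeatherObservation(0.0, 0.0)))
print(scene_from_weather(WeatherObservation(5.0, 1.0)))
```

A linear mapping with a cap is the simplest choice here; a production setup might instead use curves tuned by the VFX artists.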

The team makes extensive use of the Blueprint visual scripting system to author behaviors, script logic, and trigger events. In one example, the entire set can be reconfigured with a single click at a director’s instruction—live, on air. Directors can also change the lighting, switch to a different feed, cut to a new position, and so on, all in real time.

According to Drones, live virtual broadcasting changes the complexion of the production. Compared with the earlier prerecorded segments, the live approach is more challenging, but it brings a sense of immediacy that engages the viewer.

“Informational storytelling is best done in a live format,” he says. “You want your talent to give the latest info with genuine in-the-moment reactions that connect to the viewer. Prerecorded visual content limits the ability to deliver live storytelling in order to keep the graphics relevant. Our new live virtual studio removes this limitation.”

Drones is even more excited about the future of mixed reality broadcast after seeing the Unreal Engine 5 announcement.

“I was blown away by it,” he says. “The barrier of entry for development and 3D graphics has slowly been eroding away as time goes on, but in my opinion no other software solution in recent time has contributed to removing this barrier more than Unreal Engine. The Unreal 5 announcement was unconditional proof of that, and I’m very excited to hear more on it.”


Source:

https://www.unrealengine.com/en-US/spotlights/the-weather-channel-s-new-studio-brings-immersive-mixed-reality-to-daily-live-broadcasts