Pinterest has always had an advantage in context and user intent. By cultivating shopping and product-discovery use cases, it has set itself up to monetize in native ways. It has slowly leaned into that over the past few years, adding more transactional features for direct commerce.

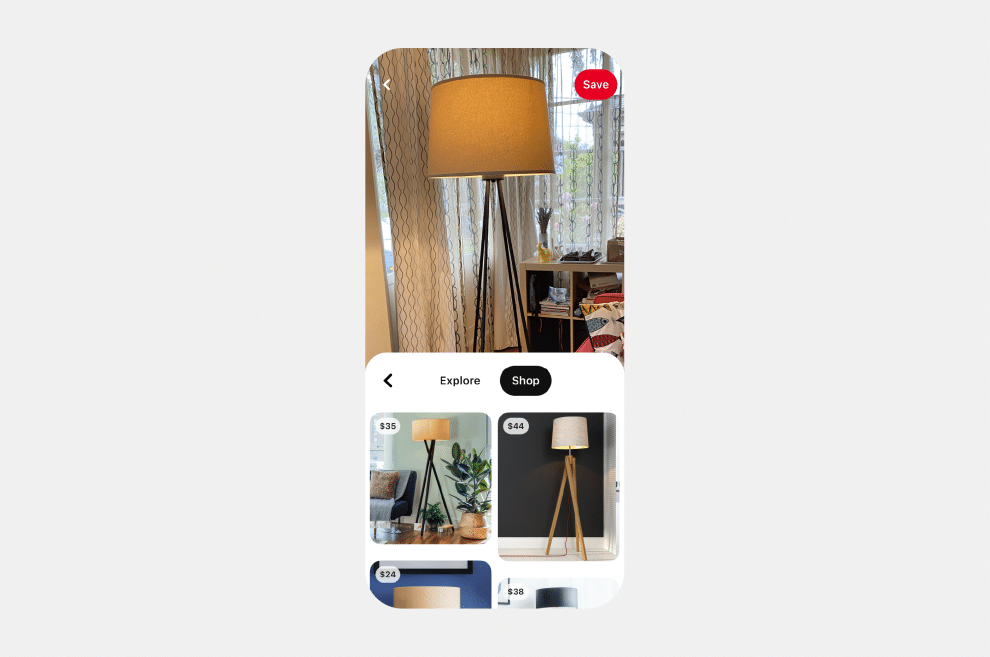

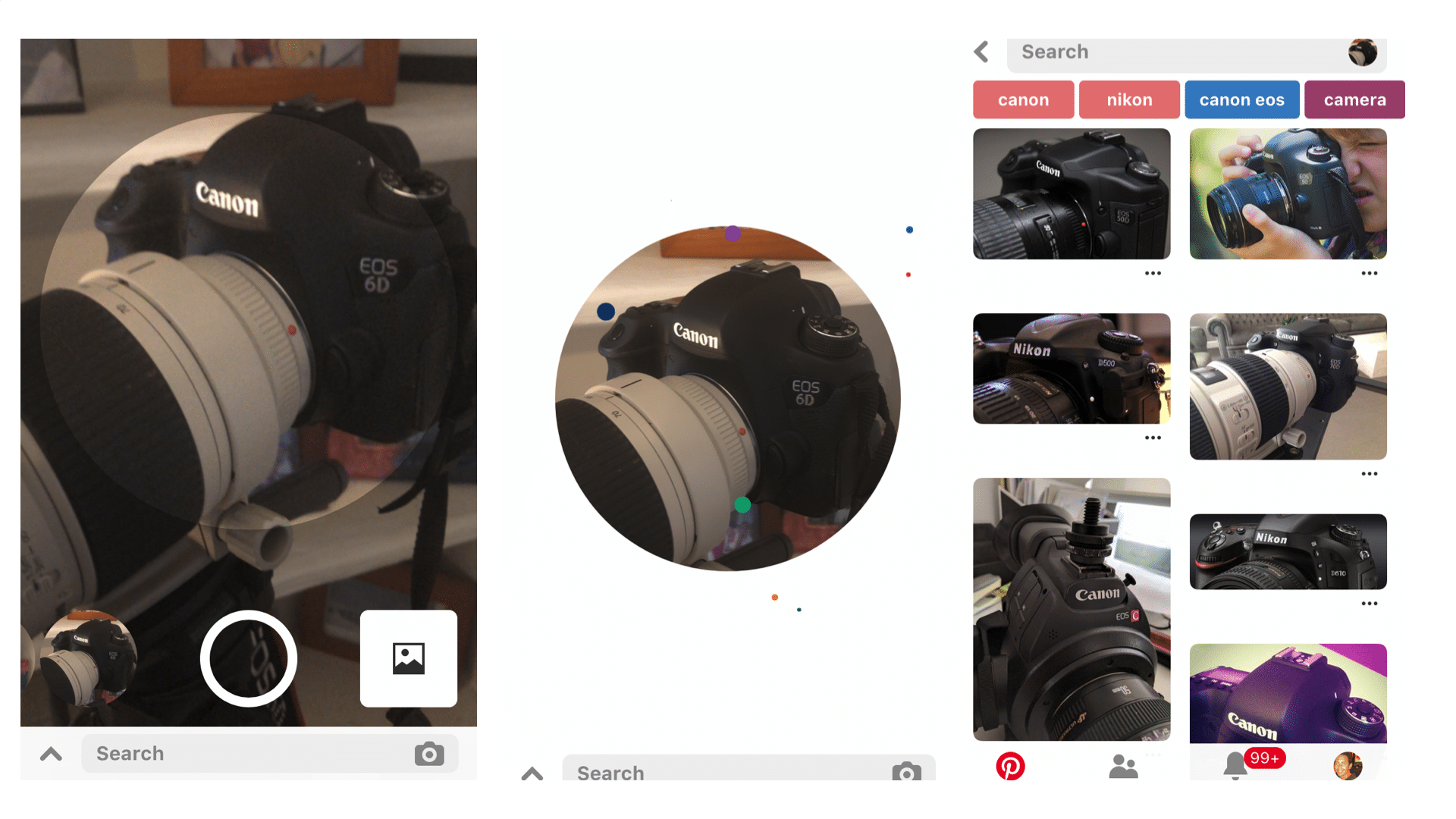

The latest move is a new Shop tab in Pinterest's Lens search results. For those unfamiliar, Lens is Pinterest's visual search play. Like Google Lens, it's a "search what you see" proposition: point your phone at items in the real world to trigger Pinterest searches for visually similar merchandise.

Lens search results now include Shoppable Pins, an existing Pinterest feature that makes items purchasable. This marries two Pinterest use cases. The first is the visual capability to search for things you see; the second is the transactional capability to buy the things you search for.

Visual Confirmation

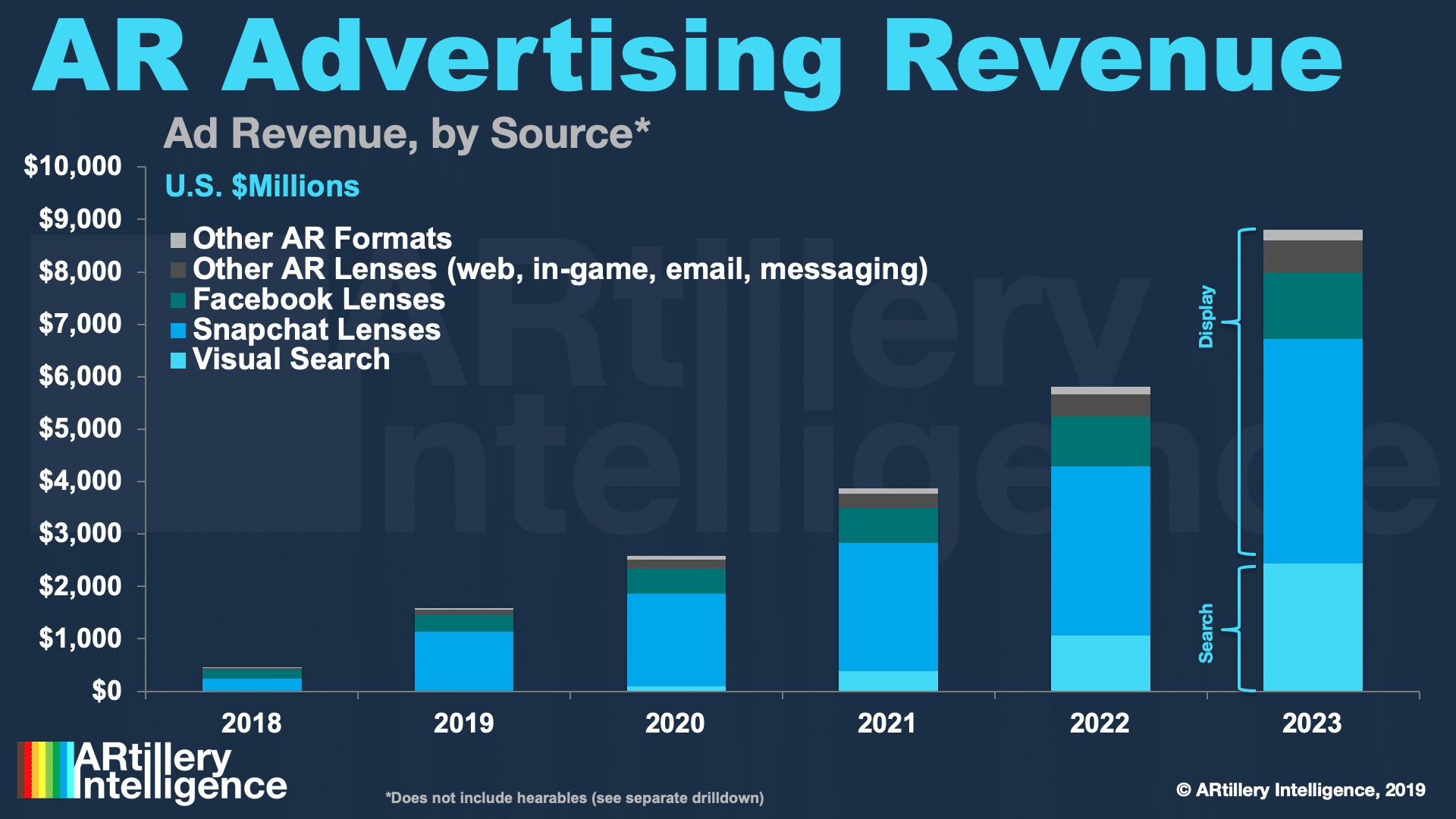

In launching the Shop feature in Lens results last week, Pinterest shared some fresh data, including the fact that visual searches have tripled year over year. Over the same period, it has also doubled the number of attributes it can detect in women's fashion (think: style, texture, etc.).

It has also improved its matching accuracy by 50 percent. This joins visual search milestones it has already reported, such as the fact that it recognizes 2.5 billion products through Lens. These numbers should grow as Pinterest doubles down on Lens.

User response should further validate and fuel this effort. Eighty-five percent of consumers want visual content; 55 percent say visual search is instrumental in developing their style; 49 percent use it to develop brand relationships; and 61 percent report that it elevates in-store shopping.

All of this makes integrating shoppable buttons into visual search results a logical step. Most of Pinterest's work with Lens has been experimental, meant to ensure that the technology works and that consumers engage with it. Adding transactional buttons is a strong signal that Lens is now ready for prime time.

Make the World Pinnable

Stepping back, visual search aligns with Pinterest's roadmap to essentially increase its "inventory" by making the physical world pinnable. That's particularly true in Pinterest-strong verticals like fashion, home goods and food, where products surround us and trigger engagement.

Google is doing something similar, future-proofing its core search business with more modalities (including voice search). Its advantage is a broader range of recognizable visual subjects, thanks to the AI training data it gained from building the world's largest image search engine and to years of knowledge-graph data.

But Pinterest may have an edge in the smaller subset of visual searches that are monetizable. So far, Google Lens' featured use cases involve general-interest subjects like pets and flowers. Pinterest, on the other hand, is all about food, fashion, and things that are inherently shoppable.

Of course, the wild card is Amazon. It has the largest product database of all, including images that could serve as a visual search training set. It has also waded into the visual search pool, first with its oft-forgotten Flow app from the 2010s and, more recently, through its collaboration with Snap.

Will it Stick?

The remaining question is how visual search will gain traction with a broader base of mainstream consumers. Is holding up your phone easier than typing or speaking a search query? It depends on the subject: holding your phone up to a lamp is more effective than describing it.

But visual search isn't yet a mainstream cultural habit, and it requires a behavioral shift that is physical in nature (holding up a phone). History has taught us that this kind of shift is difficult and slow. And it will only apply in situations where the subject is in view or nearby, rather than recalled from memory.

The technology will continue to progress and gain traction as a feedback loop reinforces its value and reliability, especially among camera-native Gen Z consumers, who are gradually gaining buying power. Google will also continue to incubate visual search and place it at easier touchpoints to acclimate users.

Meanwhile, Pinterest will likely evolve its UX. Pinterest Lens requires you to snap and upload pictures. Google Lens, by contrast, lets you hold up your phone to a live viewfinder and tap on items in it to search visually. Visual search will have to be that easy to achieve mass adoption.
