VR software has introduced many new challenges for developers. Of all the new elements VR software designers need to consider when creating games or general applications, rich interactions are at the very core.

It’s no surprise that all the top-selling VR games have something in common: their interactions are extremely polished and fun to play with. Games like Job Simulator (2016) paved the way for modern VR interaction, while Beat Saber (2019) showed us that simple but engaging interactions can beat big studio productions in this medium as well.

Even though my background includes game development, I’ve spent most of my career developing training simulators. Currently I’m working in the enterprise VR market for training and, as you can imagine, realistic interactions here are key.

When developing a particular interaction, whether it is for games or for training, I always strive for three things: making it a really enjoyable experience, anticipating all kinds of interaction ‘misuses’, and polishing it until everything feels right and then some.

Quality VR interactions are key to effective training because they are more engaging and authentic, developing new neural pathways that provide the ability to learn and perform new tasks. They work the same way a good professor keeps you engaged by making lessons entertaining, which facilitates the assimilation of new knowledge. Bad interactions cause frustration and decrease training efficacy.

The main challenge when developing VR interactions is the amount of freedom that the medium inherently presents to its users. In traditional videogames we use action buttons to interact with the world, and the object and the context determine what will happen. In VR we use natural gestures instead: we pick things up like we would in real life, we manipulate complex tools, and we can throw things around, all using our own hands.

This is a big paradigm shift in the way we design and implement interactions and presents a big challenge because users are inherently curious and they like freedom—especially in VR. Some will follow the ‘expected’ behaviors of the application, but others will be carried by their curiosity and inevitably test the limits of the world before them. Surprising the user by anticipating the creative ways that they interact with objects can make the experience more enjoyable and increase the sense of being in a cohesive world rather than a scripted experience.

In this article I will showcase some of the interactions I’ve developed and offer some thoughts on the design approach to each.

Lab Elements

I’ll start with a sci-fi lab that is part of a sandbox we developed internally to create and test new interaction mechanics.

The lamp on the left has three handles that can be grabbed using a single hand or both hands at the same time, from the inside or the outside. It is attached to the world through a mechanical IK-driven arm that hangs from the ceiling, which constrains its range of movement to a sphere and gives context for its ability to be placed in any position.
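
The spherical range of movement can be sketched as a clamp on the lamp's distance from the point where the arm meets the ceiling. This is only an illustration under stated assumptions, not the code behind the sandbox: the function name, the tuple-based vectors, and the `max_reach` parameter are all hypothetical.

```python
import math

def clamp_to_sphere(target, anchor, max_reach):
    """Keep `target` within `max_reach` of `anchor` (hypothetical arm mount).

    Points inside the sphere are returned unchanged; points outside are
    pulled back onto the sphere's surface along the same direction.
    """
    offset = tuple(t - a for t, a in zip(target, anchor))
    dist = math.sqrt(sum(c * c for c in offset))
    if dist <= max_reach or dist == 0.0:
        return tuple(target)
    scale = max_reach / dist
    return tuple(a + c * scale for a, c in zip(anchor, offset))

# The hand asks for a position 5 m below the anchor; the arm only reaches 2 m.
lamp_pos = clamp_to_sphere((0.0, -5.0, 0.0), (0.0, 0.0, 0.0), 2.0)
```

The clamped position would then be handed to the IK solver as the arm's end-effector target, so the arm always has a reachable pose.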

Of all the changes that we made, adding haptics and smoothing out the movement of the lamp had by far the biggest impact on user experience. The smoothing filter conveys the feeling of mechanical resistance (that it’s not possible to move it around that easily) and adding haptics multiplies this feeling tenfold. It is also very gratifying to see how the mechanical arm follows the lamp around when you move it, and how it keeps swinging a little when you release it. These are little things that we add for no other reason than to keep the user happy and engaged.
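
The article does not say which smoothing filter was used. One common choice that produces this "heavy" feel is exponential smoothing toward the hand position, written so that the result does not depend on frame rate. The sketch below assumes that approach; `smooth_follow` and its `response` parameter are hypothetical names.

```python
import math

def smooth_follow(current, target, dt, response=8.0):
    """Move `current` a fraction of the way toward `target`.

    `response` (in 1/s) is a hypothetical tuning value: lower values make
    the lamp lag further behind the hand, which reads as more resistance.
    Using exp() keeps the behaviour the same at 72, 90 or 120 Hz.
    """
    alpha = 1.0 - math.exp(-response * dt)
    return tuple(c + (t - c) * alpha for c, t in zip(current, target))

# One 90 Hz frame: the lamp moves only part of the way toward the hand.
lamp = smooth_follow((0.0, 0.0, 0.0), (1.0, 0.0, 0.0), 1.0 / 90.0)
```

Calling this once per frame with the tracked hand position as `target` gives a lamp that trails the hand slightly and settles when the hand stops.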

The laser has a different IK setup. One thing we experimented with is the rubber joint that connects the head to the arm and gives it two degrees of rotational freedom. We got the inspiration from the avatar wrist in the game Lone Echo and thought it was a cool way to model a ball joint.

The laser beam works by casting a ray from the tip and creating a polygon strip that simulates the burn once the surface has been exposed long enough. Smoke particles help to add a bit more detail as well.
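
A rough sketch of that idea follows, with the surface simplified to an infinite plane and the burn strip reduced to a list of points. The names `ray_plane_hit` and `BurnTrail`, the grid cell size, and the burn threshold are assumptions for illustration; a real project would use the engine's raycast and mesh APIs instead.

```python
def _dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def ray_plane_hit(origin, direction, plane_point, plane_normal):
    """Return where a ray from the laser tip hits a plane, or None."""
    denom = _dot(direction, plane_normal)
    if abs(denom) < 1e-9:
        return None  # beam runs parallel to the surface
    offset = [p - o for p, o in zip(plane_point, origin)]
    t = _dot(offset, plane_normal) / denom
    if t < 0.0:
        return None  # surface is behind the laser tip
    return tuple(o + d * t for o, d in zip(origin, direction))

class BurnTrail:
    """Accumulates exposure per surface cell and records burnt points."""

    def __init__(self, burn_time=0.5, cell=0.01):
        self.burn_time = burn_time  # seconds of exposure before a burn appears
        self.cell = cell            # size of one exposure cell, in metres
        self.exposure = {}
        self.burned = set()
        self.strip = []             # points the polygon strip would be built from

    def expose(self, hit, dt):
        key = tuple(round(c / self.cell) for c in hit)
        if key in self.burned:
            return
        self.exposure[key] = self.exposure.get(key, 0.0) + dt
        if self.exposure[key] >= self.burn_time:
            self.burned.add(key)
            self.strip.append(hit)

# Each frame: cast the ray, then add exposure where it lands.
trail = BurnTrail()
hit = ray_plane_hit((0.0, 0.0, 1.0), (0.0, 0.0, -1.0), (0.0, 0.0, 0.0), (0.0, 0.0, 1.0))
if hit is not None:
    trail.expose(hit, 1.0 / 90.0)
```

Tracking exposure per cell means that sweeping the beam quickly leaves no mark, while holding it still produces a burn, which matches the "exposed long enough" condition.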

Lab Battery

This clip showcases a battery swap in the same sci-fi environment. The main purpose is to study objects with different constraints that need to be manipulated correctly in order to complete the task.

The first step is opening the door, which can only be rotated around its axis. Being anchored to the world has a very important consequence for the grab action: instead of the object snapping to the hand, it is the hand that snaps to the handle.
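
A minimal sketch of this kind of anchored grab, assuming a vertical hinge axis: the tracked hand position is reduced to a single door angle, the angle is clamped to the door's range, and the visible hand is then placed on the handle rather than at the controller. The function names and the angle limits are hypothetical.

```python
import math

def door_angle_from_hand(hand, hinge, min_deg=0.0, max_deg=110.0):
    """Angle (degrees) about a vertical hinge that points the door at the hand.

    Only the horizontal (x, z) offset matters; moving the hand up or down
    cannot change a door that rotates around a vertical axis.
    """
    dx = hand[0] - hinge[0]
    dz = hand[2] - hinge[2]
    angle = math.degrees(math.atan2(dz, dx))
    return max(min_deg, min(max_deg, angle))

def handle_position(hinge, angle_deg, radius, height):
    """Where the visible hand is drawn: on the handle, not at the controller."""
    a = math.radians(angle_deg)
    return (hinge[0] + radius * math.cos(a),
            hinge[1] + height,
            hinge[2] + radius * math.sin(a))

angle = door_angle_from_hand((1.0, 0.3, 1.0), (0.0, 0.0, 0.0))
hand_visual = handle_position((0.0, 0.0, 0.0), angle, radius=0.5, height=1.0)
```

Because the hand is derived from the door and not the other way round, the door can never be dragged off its hinge no matter where the controller goes.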

The second step is slightly more complex because it involves using both hands to unlock the mechanism that keeps the battery in place. If you try to pull out the battery without unlocking it first, the hand will maintain the grip or snap back to its position if pulled away too far.
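
One reading of this behavior is a distance threshold between the tracked controller and the grabbed handle: within the threshold the visible hand stays on the handle, and beyond it the grip breaks and the hand returns to the controller. The sketch below assumes that reading; `resolve_grip` and `break_distance` are hypothetical names and values.

```python
import math

def resolve_grip(controller_pos, handle_pos, break_distance=0.25):
    """Return (visible_hand_position, still_gripping).

    While the controller stays within `break_distance` of the handle, the
    visible hand remains on the handle. Past that, the grip is released and
    the hand is drawn at the controller again.
    """
    if math.dist(controller_pos, handle_pos) > break_distance:
        return tuple(controller_pos), False
    return tuple(handle_pos), True

# Pulling 10 cm on a locked battery: the hand keeps hold of it.
hand, gripping = resolve_grip((0.0, 0.0, 0.1), (0.0, 0.0, 0.0))
```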

Once the lock is open, the battery can be extracted. This is accomplished by constraining its position so that it can slide only along the tube until it comes free.
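
A sliding constraint like this can be sketched by projecting the hand position onto the tube's axis. The function below is an illustration only; its name, the unit-length `tube_dir` requirement, and the `tube_length` parameter are assumptions.

```python
def slide_along_tube(hand, tube_start, tube_dir, tube_length):
    """Constrain the battery to the tube axis. Returns (position, is_free).

    `tube_dir` must be a unit vector pointing out of the tube. Sideways hand
    movement is discarded; only travel along the axis moves the battery.
    """
    rel = [h - s for h, s in zip(hand, tube_start)]
    t = sum(r * d for r, d in zip(rel, tube_dir))
    if t >= tube_length:
        return tuple(hand), True  # battery has cleared the tube
    t = max(0.0, t)               # cannot be pushed deeper than fully seated
    return tuple(s + d * t for s, d in zip(tube_start, tube_dir)), False

# The hand drifts 10 cm sideways, but the battery stays on the axis.
pos, free = slide_along_tube((0.3, 0.1, 0.0), (0.0, 0.0, 0.0), (1.0, 0.0, 0.0), 0.5)
```

Once `is_free` is true, the battery can be treated as an ordinary grabbable object that follows the hand.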

Drill

The first challenge when recreating a drill in VR is to determine the necessary conditions to start the drilling process. After all, there is no physical resistance in real life that prevents someone from moving their hand through a virtual wall.

In this example, the conditions that are required are:

- The drill bit must be able to penetrate the material it’s pressed against.

- The drill bit needs to be oriented at a correct angle against the surface.

- The drill needs to be slowly pushed against the surface when the user presses the trigger.
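
The three conditions above could be combined into a single check along these lines. This is a sketch under stated assumptions: the `DrillState` fields, the function name, and every threshold value are hypothetical and would need tuning in a real application.

```python
import math
from dataclasses import dataclass

@dataclass
class DrillState:
    material_drillable: bool  # can this bit penetrate this material?
    bit_dir: tuple            # unit vector along the drill bit
    surface_normal: tuple     # unit normal pointing out of the surface
    push_speed: float         # speed toward the surface, in m/s
    trigger: float            # trigger pull, 0..1

def can_start_drilling(s, max_angle_deg=15.0, max_push_speed=0.2, min_trigger=0.1):
    """True only when all three conditions hold at the same time."""
    # Angle between the bit and the direction straight into the surface.
    cos_angle = -sum(b * n for b, n in zip(s.bit_dir, s.surface_normal))
    angle = math.degrees(math.acos(max(-1.0, min(1.0, cos_angle))))
    return (s.material_drillable
            and angle <= max_angle_deg
            and 0.0 < s.push_speed <= max_push_speed
            and s.trigger >= min_trigger)

state = DrillState(True, (0.0, 0.0, -1.0), (0.0, 0.0, 1.0), 0.05, 1.0)
ok = can_start_drilling(state)
```

Keeping the check in one place makes it easy to show the user which condition failed, for example with a different haptic or visual cue per condition.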

If the user tries to pull the tool in any direction other than straight out during the drilling process, the drill will be kept in place and the hand will snap back if it is moved too far away from it.

Subtle haptics play a big role in the interaction, making things such as the drill rotation or the physical resistance of the medium more believable.

Cabling

This is a training and assessment application that lets users operate networking devices in a virtual datacenter. One very interesting feature is that all elements are synchronized in real time with actual Cisco devices running in the cloud, creating a bridge between the virtual world and real life.

The most challenging part was getting the cables right. To create each cable, we used a skinned mesh with 22 rigid bodies chained together using joint primitives. Physics-driven objects are always a potential source of bugs, so I spent a great amount of time making sure that the cables would not only feel realistic but would also respond well to abuse.
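
The cables described here rely on a physics engine's rigid bodies and joints. To show the underlying idea without an engine, the sketch below swaps in a different and much simpler technique: 22 point masses integrated with Verlet steps and held together by fixed-length links. It is a stand-in for illustration, not the approach used in the application, and the class name, link length, and tuning values are all assumptions.

```python
import math

class CableChain:
    """22 points joined by fixed-length links (a stand-in for jointed rigid bodies)."""

    def __init__(self, start, count=22, link=0.05):
        self.link = link
        self.points = [(start[0], start[1] - i * link, start[2]) for i in range(count)]
        self.prev = list(self.points)
        self.pinned = {0: self.points[0]}  # index -> fixed position (plug or hand)

    def step(self, dt, gravity=-9.81, damping=0.98, iterations=20):
        # Verlet integration: velocity is implied by the previous position.
        for i in range(len(self.points)):
            p, q = self.points[i], self.prev[i]
            vx, vy, vz = ((a - b) * damping for a, b in zip(p, q))
            self.prev[i] = p
            self.points[i] = (p[0] + vx, p[1] + vy + gravity * dt * dt, p[2] + vz)
        for i, pos in self.pinned.items():
            self.points[i] = pos
            self.prev[i] = pos
        # Repeatedly pull each pair of neighbours back to the link length.
        for _ in range(iterations):
            for i in range(len(self.points) - 1):
                self._relax(i, i + 1)

    def _relax(self, i, j):
        a, b = self.points[i], self.points[j]
        dist = math.dist(a, b)
        wi = 0.0 if i in self.pinned else 1.0
        wj = 0.0 if j in self.pinned else 1.0
        if dist == 0.0 or wi + wj == 0.0:
            return
        # Signed length error, shared between whichever ends are free to move.
        k = (dist - self.link) / dist / (wi + wj)
        delta = tuple((y - x) * k for x, y in zip(a, b))
        self.points[i] = tuple(x + d * wi for x, d in zip(a, delta))
        self.points[j] = tuple(y - d * wj for y, d in zip(b, delta))

    def length(self):
        return sum(math.dist(a, b) for a, b in zip(self.points, self.points[1:]))

cable = CableChain((0.0, 2.0, 0.0))
cable.step(1.0 / 90.0)
```

Grabbing the cable would amount to adding the grabbed index and the hand position to `pinned` each frame. Responding well to abuse then mostly means deciding what happens when two pinned points are pulled further apart than the cable can stretch, for example by releasing one of them.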

Since there are many interactive elements so close to each other, it’s also very important to fine-tune many other details such as visual cues, haptic feedback, and smooth grab/release transitions. All these features working together can turn a complex interaction into something that feels natural.

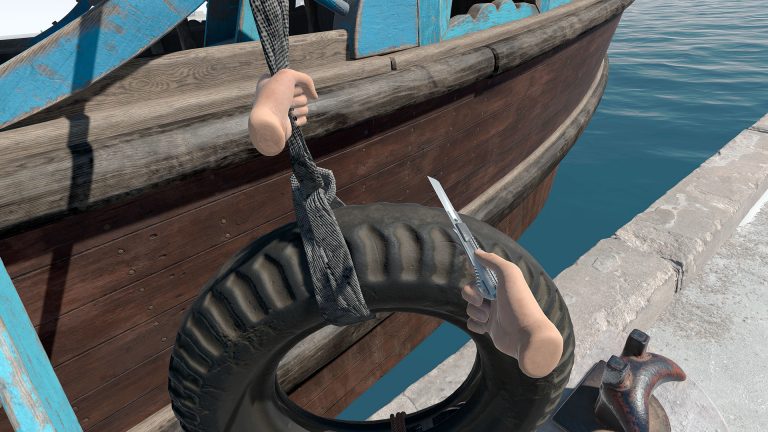

Rope & Tire

This interaction showcases part of a training session that requires the user to scan different elements looking for smuggled items.

Rope physics can be tricky to implement, and even more so in VR if you give the user freedom to swing the rope around. The rope in this video is modeled using approximately 20 bones that can be grabbed individually. The narrower part that goes through the hand is simulated by scaling the bone when it’s grabbed, which makes the mesh shrink at that point. For such a simple operation, it has a significant visual impact.

The tire at the end makes it difficult to move the rope around, which increases the perceived weight. To simulate the haptic feedback I use the movement of the tire itself, sending signals that are proportional to the relative speed at which it is moving, an approach that works really well—especially when it swings around.
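
A speed-proportional haptic signal of this kind might be computed as follows. The article does not say what the tire's speed is measured relative to, so this sketch assumes it is relative to the hand holding the rope; the function name and `gain` value are also hypothetical.

```python
import math

def tire_haptic_amplitude(tire_velocity, hand_velocity, gain=0.4):
    """Vibration amplitude in 0..1, proportional to the tire's speed
    relative to the hand (an assumed reference frame)."""
    relative_speed = math.dist(tire_velocity, hand_velocity)
    return min(1.0, gain * relative_speed)

# Tire swinging at 1.5 m/s while the hand is still.
amplitude = tire_haptic_amplitude((1.5, 0.0, 0.0), (0.0, 0.0, 0.0))
```

The amplitude would be sent to the controller every frame, so the vibration fades naturally as the tire's swing dies down.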

As you can see, developing interactions in VR is a detailed and challenging task, but one of the most rewarding for both developers and users. Compelling interactions in VR are usually the by-product of many different iterations until the result feels as natural and satisfying as possible.

Source:

Delighting Users with Rich Interactions is Key to Making VR Engaging & Effective